The traffic numbers look fine. Campaigns are running. The checkout page exists. Product pages have images and descriptions. And yet the conversion rate has barely moved in six months.

This is the most common starting point for businesses that turn to conversion rate optimisation looking for answers. The site isn't broken in any obvious way. There's no error page, no missing call to action, no glaring design failure. But something is wrong, and it's costing real money on every visit that doesn't convert.

The problem, in most cases, isn't the conversion rate itself. It's the order of operations. Businesses jump to testing, redesigning, or rewriting copy before they've identified what's actually causing visitors to leave. The result is a series of experiments that produce noise instead of signal, and an optimisation programme that stays stuck at average.

This guide covers how conversion rate optimisation works when done correctly: diagnosis first, testing second, and measurement tied to real behaviour rather than vanity metrics.

What Conversion Rate Optimisation Actually Measures

Conversion rate optimisation (the American spelling is "optimization"; both refer to the same discipline) is the practice of increasing the percentage of website visitors who complete a defined action. That action could be a purchase, a lead form submission, a free trial sign-up, a phone call, a content download, or any goal the business has set.

The formula is:

Conversion rate = (Conversions ÷ Total visitors) × 100

If 1,000 people visit a landing page and 30 complete the desired action, the conversion rate is 3%.
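The formula translates directly into a few lines of code. This is a minimal Python sketch. The function name `conversion_rate` is illustrative, and it returns a percentage to match the formula above.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Return the conversion rate as a percentage: (conversions / visitors) * 100."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    if not 0 <= conversions <= visitors:
        raise ValueError("conversions must be between 0 and visitors")
    # Multiply before dividing to keep simple cases exact in floating point.
    return conversions * 100 / visitors


# The landing-page example from the text: 30 conversions from 1,000 visitors.
print(conversion_rate(30, 1000))  # 3.0
```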

What this number tells you is limited on its own. Average e-commerce conversion rates sit between 1% and 4% depending on vertical, traffic source, and device type. B2B lead generation pages typically convert between 2% and 5% for cold traffic. SaaS trial sign-up pages range from 1% to 10% depending on offer structure and how much friction exists in the sign-up flow.

The gap between where conversion rates sit and where they could sit is consistently wider than most businesses realise. According to Baymard Institute, whose checkout usability research covers more than 44,000 real-user sessions, the average large e-commerce site has 39 areas of potential checkout improvement, even among Fortune 500 companies that have already run optimisation projects. The issue is rarely that the opportunity doesn't exist. It's that most teams don't know where to look for it.

Why Most Optimisation Programmes Stall

Most businesses start CRO by running an A/B test on their homepage headline or button colour. Neither of these is where the leverage typically sits, and neither is what the evidence recommends doing first.

The failure mode follows a consistent pattern: a team identifies a metric they want to improve, picks the most visible page element they think might be responsible, runs a test, and waits. The result either shows no significant difference or a small lift that doesn't hold after the test ends. The cycle repeats.

What's missing is diagnosis. Running tests without first understanding the root cause of the conversion problem is the equivalent of treating symptoms without identifying the condition.

"You don't have generic problems. You have specific problems." — Peep Laja, founder of CXL

That distinction matters more than most teams realise. Spending testing cycles on elements that aren't causing the conversion problem produces noise, not signal, and optimising based on that noise means the real barrier continues to cost conversions with every visit.

The structured approach that produces repeatable results looks different:

- Identify the specific page or funnel step where conversions are lost

- Determine which type of barrier is causing the drop-off

- Form a hypothesis based on the barrier, not on general opinion about what looks better

- Design a test that validates or invalidates the hypothesis

- Measure lift against a baseline established before any test began

Most CRO programmes skip directly from step one to step four. That gap is where the wasted budget accumulates.

The Five Conversion Barriers That Appear Most Often

Across documented CRO case studies, teams that ran structured site audits before testing identified five barrier categories as the most prevalent causes of conversion loss.

Checkout friction was present in 35% of audited sites. According to Baymard Institute, the average documented cart abandonment rate across e-commerce is 70.19%, aggregated from 49 independent studies. Of shoppers who abandon, 48% cite unexpected extra costs appearing at checkout as the primary reason, and 18% specifically cite a checkout process that was too long or too complicated. Baymard's own research estimates that better checkout design alone could recover $260 billion in lost orders across US and EU e-commerce annually.
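Cart abandonment rate is commonly calculated as the share of shopping carts that are created but never turn into completed orders. The sketch below assumes you can count both events over the same period. The function name and the example figures are hypothetical and are not Baymard data.

```python
def cart_abandonment_rate(carts_created: int, orders_completed: int) -> float:
    """Percentage of carts that were created but not converted into an order."""
    if carts_created <= 0:
        raise ValueError("carts_created must be positive")
    if not 0 <= orders_completed <= carts_created:
        raise ValueError("orders_completed must be between 0 and carts_created")
    return (carts_created - orders_completed) * 100 / carts_created


# Hypothetical month: 2,000 carts created, 600 completed orders.
print(cart_abandonment_rate(2000, 600))  # 70.0
```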

Form complexity was flagged in 26% of sites. The average checkout flow contains 23.48 form elements, according to Baymard, compared to an ideal of 12 to 14. Forms with unnecessary fields, ambiguous labels, inline validation errors that fire mid-entry, and no explanation of why certain information is required all contribute to abandonment before submission.

Mobile UX failures appeared in 22% of audited cases. Research from Google's "The Need for Mobile Speed" study found that 53% of mobile site visitors will leave a page that takes longer than three seconds to load. Beyond speed, tap targets too small to use comfortably, horizontal scrolling on product pages, and primary CTAs positioned below the fold on smaller screens consistently depress mobile conversion rates relative to desktop.

Weak trust signals were identified in 18% of audits. Every conversion requires a visitor to extend some form of trust, whether sharing payment information, personal data, or professional time. Pages that lack visible security indicators, verifiable customer reviews, clear return policies, or recognisable brand signals introduce doubt at exactly the moment a visitor is deciding whether to proceed.

Unclear primary calls to action were found on 15% of the sites reviewed. This includes pages with multiple competing CTAs of equal visual weight, button labels that don't communicate what happens next, and pages where the primary action isn't visible without scrolling.

These five barrier types rarely appear in isolation. Most underperforming pages have two or more present simultaneously, which is one reason surface-level testing rarely produces lasting results.

How to Run a Diagnostic Before You Test Anything

A proper conversion rate optimisation audit begins with the data that already exists before anyone changes anything on the site.

"Would you rather have a doctor operate on you based on an opinion, or careful examination and tests? Exactly. That's why we need to conduct proper conversion research." — Peep Laja, CXL

Map the funnel and find the drop-off point

Start by mapping every step a visitor takes from landing on the site to completing the conversion goal. Identify the step with the highest drop-off rate. That step is your first area of investigation. Aggregate traffic data won't tell you which barrier is present, but it will tell you precisely where in the funnel visitors are exiting.
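Once each step has a visitor count, finding the drop-off point is a simple calculation. The following is a minimal sketch that assumes you can export unique visitors per funnel step from your analytics tool. The step names and counts are hypothetical.

```python
# Hypothetical funnel counts: unique visitors reaching each step, in order.
funnel = [
    ("Landing page", 10_000),
    ("Product page", 6_200),
    ("Add to cart", 1_900),
    ("Checkout started", 1_100),
    ("Purchase", 300),
]


def step_drop_off(steps):
    """Return (from_step, to_step, drop_off_pct) for each consecutive pair of steps."""
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(steps, steps[1:]):
        drop = (count_a - count_b) * 100 / count_a if count_a else 0.0
        results.append((name_a, name_b, drop))
    return results


transitions = step_drop_off(funnel)
for a, b, drop in transitions:
    print(f"{a} -> {b}: {drop:.1f}% drop-off")

# The transition that loses the largest share of visitors is the first place to look.
worst = max(transitions, key=lambda t: t[2])
print(f"Investigate first: {worst[0]} -> {worst[1]}")
```

This sketch ranks steps by relative drop-off. Ranking by absolute visitors lost can point to a different step, so it is worth checking both views.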

Segment by device type and traffic source

Conversion rates vary significantly across device types and acquisition channels. A funnel converting at 4% on desktop and 1% on mobile has a mobile-specific problem. A landing page converting well for email traffic but poorly for paid search has a message-match problem. Segmentation separates these issues so you're not averaging them into invisibility. Research consistently shows that mobile devices generate more than half of all site traffic in most retail categories, yet complete purchases at significantly lower rates than desktop, a gap that only becomes visible once traffic is properly separated by device.
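To make the averaging effect concrete, the sketch below splits aggregated traffic by device and by source. All rows are hypothetical. In practice they would come from an analytics export that records device, source, visits and conversions.

```python
from collections import defaultdict

# Hypothetical aggregated rows: (device, traffic_source, visits, conversions)
rows = [
    ("desktop", "email", 2_000, 110),
    ("desktop", "paid_search", 3_000, 105),
    ("mobile", "email", 2_500, 50),
    ("mobile", "paid_search", 6_000, 36),
]


def rate_by(rows, key_index):
    """Aggregate visits and conversions by one dimension and return CR (%) per segment."""
    visits = defaultdict(int)
    conversions = defaultdict(int)
    for row in rows:
        key = row[key_index]
        visits[key] += row[2]
        conversions[key] += row[3]
    return {k: conversions[k] * 100 / visits[k] for k in visits}


overall = sum(r[3] for r in rows) * 100 / sum(r[2] for r in rows)
print(f"Overall: {overall:.2f}%")
print("By device:", {k: round(v, 2) for k, v in rate_by(rows, 0).items()})
print("By source:", {k: round(v, 2) for k, v in rate_by(rows, 1).items()})
```

With these made-up figures, the blended rate of roughly 2.2% hides a desktop rate above 4% and a mobile rate close to 1%.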

Review on-page behaviour data

Heatmaps, scroll depth data, and session recordings are not substitutes for structured hypothesis formation, but they surface behavioural signals that analytics alone can't reveal. A form field showing high interaction followed by abandonment is pointing at a specific friction point. A CTA receiving almost no clicks despite being above the fold may have a copy or contrast issue. Use this data to inform hypotheses, not to replace them.

Audit against the five barrier categories

Once you've identified the page or funnel step with the highest drop-off, review it specifically against the five barrier types covered above. This prevents the audit from becoming a general impression exercise and focuses attention on the variables that evidence shows are most likely to affect conversion behaviour.

This sequence (analytics, then segmentation, then behaviour data, then a structured barrier audit) takes longer than launching a test and hoping. It also produces tests that are far more likely to generate meaningful results.

Prioritising Fixes: Where to Start

Not all fixes carry equal impact, effort, or reversibility. Prioritisation should run on three criteria.

Evidence strength. How clearly does the data indicate that a specific barrier is causing conversion loss on this specific page? The stronger the evidence, the higher the fix belongs in the queue. A heatmap showing 80% of visitors never reaching the CTA is strong evidence. A general feeling that the page looks dated is not.

Traffic volume. A fix on a page receiving 15,000 visits per month will generate more total lift in absolute terms than the identical percentage improvement on a page receiving 500 visits per month. If both pages convert at 3%, a 10% relative improvement adds 45 conversions per month on the high-traffic page (15,000 × 3% × 10%) and 1.5 on the low-traffic page, a thirtyfold difference from the same piece of work. Sequencing fixes by traffic impact rather than implementation convenience consistently produces higher early returns from the same amount of effort. Baymard Institute's research on checkout optimisation makes a related point about where leverage sits: the average large e-commerce site can gain a 35.26% increase in conversion rate through checkout improvements alone, an outsized return precisely because checkout is the highest-intent page in the funnel.
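The traffic arithmetic is worth writing down explicitly, because percentage lifts are easy to misread as absolute ones. This sketch assumes a 3% baseline conversion rate on both pages and a 10% relative lift. Both figures are illustrative.

```python
def additional_conversions(monthly_visits, baseline_rate, relative_lift):
    """Extra conversions per month from a relative lift on a baseline conversion rate."""
    return monthly_visits * baseline_rate * relative_lift


# Assumes both pages convert at 3% and both receive the same 10% relative lift.
high = additional_conversions(15_000, 0.03, 0.10)
low = additional_conversions(500, 0.03, 0.10)
print(round(high, 1), round(low, 1))  # 45.0 1.5
```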

Implementation risk. Changes to checkout flows or payment processes carry more technical risk than copy revisions or CTA adjustments. Where evidence strength is roughly equal across two fixes, start with the lower-risk option first to generate early data without exposing core transaction flows to unnecessary disruption.
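The three criteria can be combined into a sortable backlog. The article does not prescribe a formula, so the ordering below is one possible choice and should be treated as an assumption. It ranks first by evidence strength, then by traffic, and uses lower risk as the tie-breaker. The 1 to 5 scales and the example fixes are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Fix:
    name: str
    evidence: int        # hypothetical scale: 1 (hunch) to 5 (strong data)
    monthly_visits: int  # traffic to the affected page
    risk: int            # hypothetical scale: 1 (copy tweak) to 5 (payment flow change)


def priority_key(fix: Fix):
    # Higher evidence first, then higher traffic, then lower implementation risk.
    return (-fix.evidence, -fix.monthly_visits, fix.risk)


backlog = [
    Fix("Homepage redesign", evidence=1, monthly_visits=40_000, risk=4),
    Fix("Show shipping costs on product page", evidence=4, monthly_visits=15_000, risk=2),
    Fix("Cut optional checkout fields", evidence=4, monthly_visits=15_000, risk=3),
    Fix("Rewrite pricing-page CTA label", evidence=3, monthly_visits=500, risk=1),
]

for fix in sorted(backlog, key=priority_key):
    print(fix.name)
```

A weighted score is a common alternative to this lexicographic ordering. The trade-off is that the weights themselves become opinions that need justifying.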

The fixes most consistently over-prioritised without adequate evidence: homepage redesigns, navigation restructuring, and brand-level copy revisions. These are high-effort changes that alter many variables simultaneously, making it nearly impossible to isolate what produced a result.

How A/B Testing Works When Done Correctly

A/B testing is the standard method for validating CRO hypotheses. It works by randomly splitting traffic between two versions of a page at the same time, then measuring whether the difference in conversion rate between them is statistically significant.
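A standard way to check whether the difference is statistically significant is a two-sided, two-proportion z-test. The sketch below uses a pooled standard error and the normal approximation, which is reasonable at the sample sizes A/B tests need. Testing tools may use other methods, such as sequential or Bayesian approaches. The conversion counts in the example are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates (pooled SE)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Under the null hypothesis both variants share one underlying rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test: control converts 400 of 20,000, variant 470 of 20,000.
z, p = two_proportion_z_test(conv_a=400, n_a=20_000, conv_b=470, n_b=20_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```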

The most common reason A/B tests produce unreliable results is not poor test design. It's that tests are ended too early, before reaching the sample size required for statistical confidence. A result called at day five on a low-traffic page is almost always noise.

Four conditions produce reliable results.

One variable per test. Testing multiple changes simultaneously makes it impossible to determine which change drove the result. If you change the CTA copy, the button colour, and the hero image at once and observe a lift, you cannot identify which variable caused it and cannot replicate it deliberately.

Sufficient sample size. Detecting a 10% relative lift at 95% confidence with 80% statistical power requires roughly 1,600 conversions per variant on a page converting at 2%, which means about 80,000 visits per variant before drawing conclusions. Most teams run tests to a fraction of this threshold and call results early.
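Sample size can be estimated before a test starts using the usual normal-approximation formula for comparing two proportions. The sketch assumes a two-sided test at 95% confidence and 80% power, a common default that the choice of the reader may override. The minimum detectable effect is expressed as a relative lift over the baseline.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_mde, alpha=0.05, power=0.80):
    """Visitors needed per variant for a two-sided two-proportion test (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for 95% confidence
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


n = sample_size_per_variant(0.02, 0.10)
print(n, round(n * 0.02))  # visitors and expected conversions per variant
```

Under these assumptions the formula gives roughly 80,700 visitors per variant for a page converting at 2%. The exact figure shifts with the power level and test type chosen, and it rises steeply as the baseline rate or the detectable effect gets smaller.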

A defined baseline. Measure your conversion rate for a stable period before running any test. If the rate fluctuates significantly week to week, understand the cause of that variance before introducing test variables into it.
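One simple way to judge whether the baseline is stable is to look at the mean weekly rate alongside its coefficient of variation (standard deviation divided by mean). The weekly figures below are hypothetical. What counts as "too much" variation is a judgement call the article leaves to the team, so the sketch reports the number rather than applying a threshold.

```python
from statistics import mean, stdev

# Hypothetical weekly conversion rates (%) for the page, before any test runs.
weekly_rates = [2.1, 2.0, 2.3, 1.9, 2.2, 2.0, 2.1, 2.2]


def baseline_summary(rates):
    """Return the mean rate and coefficient of variation (sample stdev / mean)."""
    avg = mean(rates)
    return avg, stdev(rates) / avg


avg, cv = baseline_summary(weekly_rates)
print(f"Baseline {avg:.2f}%, week-to-week variation {cv:.1%}")
```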

A pre-defined success metric. Determine what constitutes a win before the test starts. Selecting the metric that showed the most improvement after the fact is a form of data distortion, even when unintentional.
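One lightweight way to enforce this is to write the test plan down as data before launch and have the reporting step read only the metric named in it. The sketch below is a hypothetical pattern, not a feature of any particular testing tool. The class, field names, hypothesis and results are all illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TestPlan:
    """Written before launch; frozen so its fields can't be reassigned after creation."""
    hypothesis: str
    primary_metric: str
    min_visitors_per_variant: int


def read_result(plan: TestPlan, results: dict, visitors_per_variant: int) -> str:
    """Report only the pre-registered metric, and only once the planned sample is reached."""
    if visitors_per_variant < plan.min_visitors_per_variant:
        return "Not finished: planned sample size not reached"
    return f"{plan.primary_metric}: {results[plan.primary_metric]}"


plan = TestPlan(
    hypothesis="Showing shipping costs on the product page reduces checkout abandonment",
    primary_metric="checkout_completion_rate",
    min_visitors_per_variant=80_000,
)
# Hypothetical results: the secondary metric moved more, but it was not the agreed metric.
results = {"checkout_completion_rate": "+4.1%", "add_to_cart_rate": "+9.8%"}
print(read_result(plan, results, visitors_per_variant=25_000))
print(read_result(plan, results, visitors_per_variant=82_000))
```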

The Metrics That Actually Tell You It's Working

The conversion rate number is an outcome metric. Tracking only overall conversion rate makes it difficult to attribute changes to specific causes or to identify early signs of improvement before they appear in the top-line number.

Bryan Eisenberg, whose work helped establish conversion rate optimisation as a discipline and whose clients have included Google, Dell, and Disney, has long argued that businesses treat the website as a funnel when it behaves more like a journey. The distinction matters for measurement: what you track should reflect movement through a decision process, not just whether a final action was completed.

Funnel step completion rates matter more than aggregate conversion rate for diagnostic purposes. Measuring conversion at each step separately allows you to attribute changes in overall performance to specific stages rather than treating the funnel as a single unit.

Micro-conversions provide signal before the primary conversion occurs. Adding a product to cart, initiating checkout, spending meaningful time on a pricing page, or interacting with a lead form are all indicators of forward movement. Tracking these separately shows whether changes at specific funnel stages are producing downstream effects before they appear in the headline number.

Bounce rate by traffic source identifies message-match failures that aggregate bounce rate conceals. A visitor who exits a product page within ten seconds of arriving from a paid search ad has a different problem than a visitor who exits after three minutes of reading. Source-segmented data separates these.

Revenue per visitor matters specifically in e-commerce and SaaS contexts, because it can move independently of conversion rate when average order value shifts. Tracking both together catches situations where a higher conversion rate comes at the cost of order value or customer quality. Page speed affects both: performance research commonly cites a 7% average reduction in conversions from a one-second delay in page response time.
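The sketch below shows how conversion rate and revenue per visitor can diverge. It computes conversion rate, average order value and revenue per visitor from the same inputs. The before and after figures are hypothetical, chosen so that conversions rise while average order value falls.

```python
def summarise(visitors, orders, revenue):
    """Return conversion rate (%), average order value, and revenue per visitor."""
    return {
        "conversion_rate": orders * 100 / visitors,
        "aov": revenue / orders,
        "rpv": revenue / visitors,
    }


# Hypothetical before/after figures for the same traffic volume.
before = summarise(visitors=10_000, orders=300, revenue=24_000.0)
after = summarise(visitors=10_000, orders=330, revenue=23_100.0)

for metric in ("conversion_rate", "aov", "rpv"):
    print(f"{metric}: {before[metric]:.2f} -> {after[metric]:.2f}")
```

In this example conversion rate rises from 3.0% to 3.3%, but revenue per visitor falls. A report that tracks only the first number would record a win.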

When to Bring in Outside Help

Internal CRO programmes often stall not because of insufficient effort but because of structural constraints. The team running tests is also responsible for the site, which creates a consistent pull toward testing changes the team already believes will work.

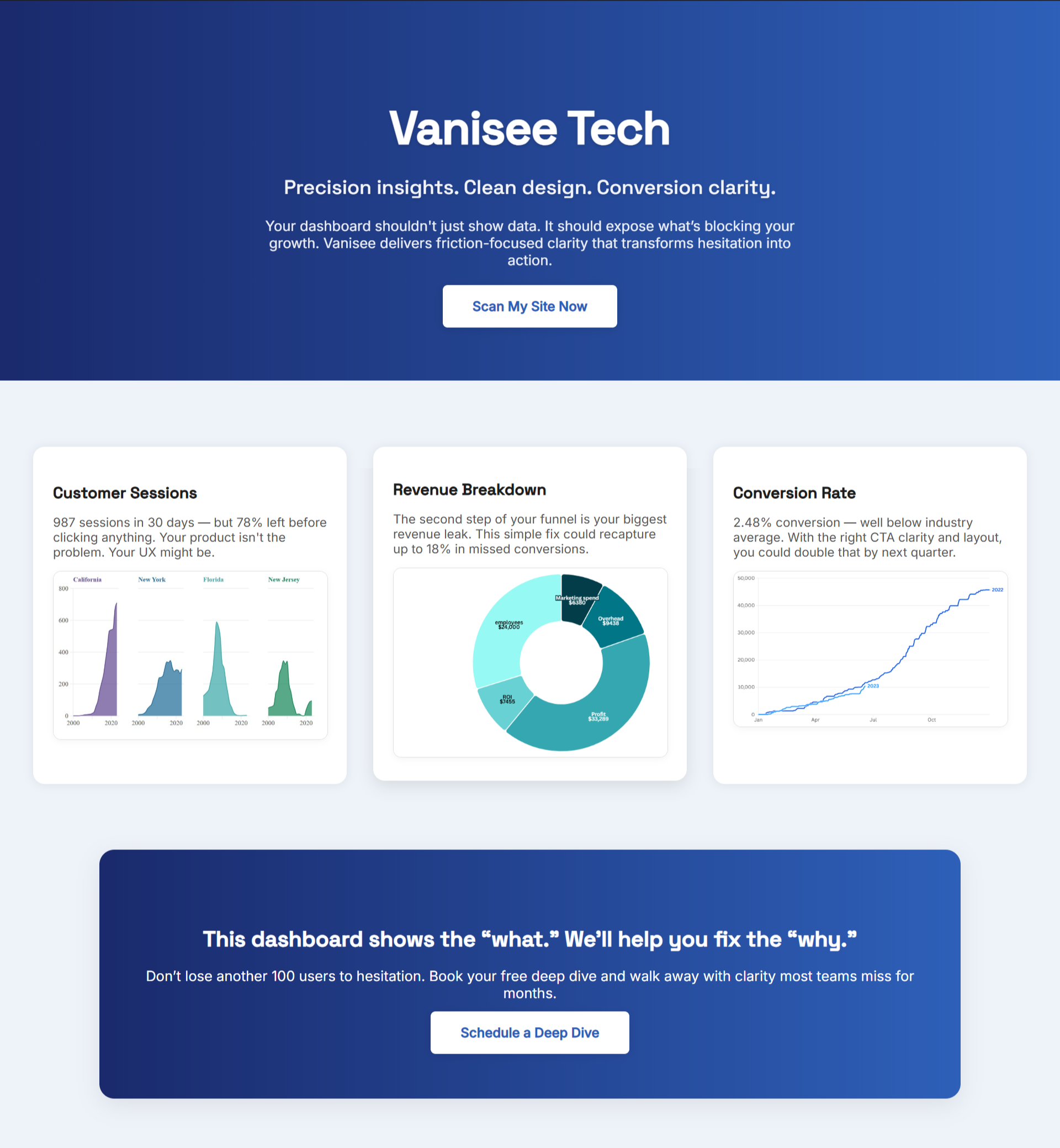

Outside support is most valuable at two specific moments.

The first is during the diagnostic phase. An independent audit surfaces barriers that internal teams have stopped noticing due to familiarity. What feels intuitive to someone who built the checkout flow is not intuitive to a first-time visitor arriving from a search result. Baymard Institute's benchmark research found that even among Fortune 500 companies that have already run optimisation projects, the average checkout flow still contains 39 identifiable areas for improvement. Familiarity doesn't eliminate problems. It hides them.

The second is when internal testing produces consistently inconclusive results. If multiple tests have run without statistically significant outcomes, the problem is usually one of two things: the wrong variables are being tested, or the baseline conversion rate is too low to detect meaningful differences at current traffic levels. External diagnostic support resolves the first problem. Traffic growth is the solution to the second.

Automated diagnostic tools can bridge the diagnostic gap before you commit to agency retainers or consultant fees. BluePing, for example, scans a page in less than 30 seconds and surfaces specific conversion barriers against a defined set of criteria, giving teams an objective starting point that internal familiarity tends to obscure. At $395 as a one-time diagnostic, it's designed to answer the question of what to fix before any testing budget gets allocated.

Conclusion

Conversion rate optimisation is not a testing programme. It is a diagnostic practice that uses testing to validate hypotheses generated by structured analysis of real visitor behaviour.

The businesses that see consistent, compounding improvement from CRO invest in diagnosis before experimentation. They know which barriers are present before they build a test to remove them. They measure precisely enough to distinguish a real result from a statistical artefact.

If your current conversion rate feels stuck and the standard advice (test your CTA, add social proof, simplify your headline) hasn't moved the number, the issue is almost certainly that you're treating symptoms without identifying the root cause.

The starting point is the same for every site: find where drop-off is highest, identify the type of barrier causing it, and address that specific barrier before moving to the next.

Want to identify your site's specific conversion barriers in less than 30 seconds? BluePing scans your page against proven conversion criteria and delivers a diagnostic report showing exactly what to fix first.
