Revenue bleeds through gaps your analytics never show. Traffic grows while conversion rates stay flat. Ad spend increases while ROI declines. By the time you hire conversion rate optimization services, six months of compounding friction has already extracted its cost—and hiring the wrong approach means spending another six months fixing the wrong problems.

The decision to hire conversion optimization help typically happens after tolerance breaks: a quarter of declining metrics, a failed A/B test, or a competitor pulling ahead. This timing creates a specific risk—urgency pushing you toward vendors promising quick wins through surface-level changes (new button colors, rewritten headlines, template swaps) instead of systematic diagnosis revealing why conversion architecture is failing.

Baymard Institute's analysis of 5,700+ ecommerce UX studies found that conversion optimization without diagnostic rigor produces temporary lifts that fade within 90 days. The changes worked—briefly—but failed to address structural friction causing the original decline.

"A problem well-defined is half-solved." — Charles Kettering

Why Conversion Rate Optimization Services Fail Without Diagnosis

Services sold on speed ("we'll have tests running in 48 hours") skip the diagnostic phase revealing what's actually broken. Quick deployment means testing hypotheses without evidence—increasing the probability of wasting budget on changes that don't address root causes.

The diagnostic phase doesn't delay results—it prevents expensive detours. Without it, you get:

Month 1-2: Vendor runs tests on surface elements (CTA copy, hero images, layout tweaks)

Month 3: Temporary conversion lift from novelty effects

Month 4-6: Lift fades as fundamental friction reasserts itself

Month 7+: Back to baseline, budget spent, actual problems still undiagnosed

Research from Optimizely on high-performing optimization programs: teams running 50+ A/B tests annually with diagnostic frameworks see sustained improvements. Teams running tests without diagnostic rigor see temporary lifts averaging 2-3% that revert within quarters.

The cost isn't just failed tests—it's the compounding revenue loss while testing symptoms instead of causes.

The Pre-Engagement Diagnostic Framework

Before signing contracts or discussing pricing, demand this diagnostic sequence. Vendors skipping these steps or rushing through them reveal their methodology: cosmetic optimization versus structural fixes.

Phase 1: Revenue Path Analysis (Week 1)

Diagnostic question: Which specific paths generate revenue, and where in those paths does friction extract the highest cost?

Standard conversion optimization services start with "let's improve your homepage" or "let's optimize your product pages." This misses the critical insight: not all pages contribute equally to revenue, and optimizing low-impact pages wastes budget.

The diagnostic identifies:

- Top 5 revenue paths (organic→category→product→cart, paid→landing→product→checkout, direct→search→product→cart, email→product→cart, social→category→product→cart)

- Conversion rate by path segment (what percentage of visitors complete each step)

- Revenue concentration (which 20% of paths generate 80% of revenue)

- Friction cost ranking (which drop-off point costs the most in lost revenue)

According to Contentsquare's friction mapping research, businesses identifying high-cost friction points reduce cart abandonment 35% through targeted optimization—versus 8-12% improvement from random testing.

This phase should produce a prioritized list: "Optimizing checkout abandonment on mobile (currently losing $12,000/month) delivers 4x ROI versus homepage optimization (currently losing $3,000/month)."

Vendors unable to articulate this prioritization are guessing about what to optimize.
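The friction cost ranking can be sketched in a few lines. This is a minimal illustration, not a vendor's method: the `PathStep` fields, the target completion rates, and the per-session values are all assumptions you would replace with figures from your own analytics. The sample numbers are chosen only to mirror the $12,000 versus $3,000 example above.

```python
from dataclasses import dataclass


@dataclass
class PathStep:
    name: str                    # e.g. "mobile checkout"
    entered: int                 # monthly sessions reaching this step
    completed: int               # monthly sessions continuing past it
    target_rate: float           # assumed achievable completion rate
    value_per_completion: float  # assumed revenue per session that continues


def monthly_friction_cost(step: PathStep) -> float:
    """Revenue lost per month versus the assumed target completion rate."""
    expected = step.entered * step.target_rate
    shortfall = max(expected - step.completed, 0.0)
    return shortfall * step.value_per_completion


def rank_by_friction_cost(steps: list[PathStep]) -> list[tuple[str, float]]:
    """Return (step name, monthly cost) pairs, most expensive first."""
    costs = [(s.name, round(monthly_friction_cost(s), 2)) for s in steps]
    return sorted(costs, key=lambda pair: pair[1], reverse=True)


# Illustrative numbers only.
steps = [
    PathStep("homepage", 50_000, 20_000, 0.42, 3.0),
    PathStep("mobile checkout", 4_000, 1_600, 0.50, 30.0),
]
ranking = rank_by_friction_cost(steps)
for name, cost in ranking:
    print(f"{name}: ${cost:,.0f}/month")
```

The ranking is only as good as the target rates behind it, so ask vendors where their benchmarks come from.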

Phase 2: Conversion Architecture Audit (Week 2)

Diagnostic question: Does current page architecture support purchase decisions, or does it create unnecessary cognitive load and hesitation?

Conversion architecture isn't aesthetics—it's the structural elements enabling decision-making:

- First-screen comprehension: Can visitors articulate the value proposition within 5-7 seconds?

- Proof proximity: Are trust signals (reviews, guarantees, security badges) positioned within one screen of primary CTAs?

- Decision support completeness: Do pages answer objections before they form (shipping costs, return policies, sizing, compatibility)?

- Friction sequencing: Does information architecture require unnecessary steps to reach purchase?

Research by Lindgaard et al. (2006) found that users form an impression of a website's visual appeal in about 50 milliseconds. If conversion architecture doesn't support instant comprehension, every subsequent optimization fights uphill against negative first impressions.

The diagnostic reveals whether current architecture can support optimization or requires structural fixes first. Example findings:

- "Product pages bury pricing 3 scrolls below hero—optimization won't fix this structural issue"

- "Checkout requires 14 fields when industry standard is 6—A/B testing button colors won't overcome this friction"

- "Mobile CTA appears 2.3 screens below fold—traffic growth compounds this architectural problem"

Vendors running tests before fixing structural issues waste budget on changes that can't overcome foundational friction.

Phase 3: Technical Performance Baseline (Week 2)

Diagnostic question: Does technical performance enable or prevent conversion at decision points?

Page speed matters differently depending on where in the funnel it occurs. A slow homepage affects all downstream traffic. A slow checkout loses users ready to purchase. A slow category page reduces product discovery.

Google's research: 53% of mobile site visits are abandoned when pages take longer than 3 seconds to load. But this statistic masks critical context: abandonment rates vary by page position in the purchase path and by traffic source.

The diagnostic establishes:

- Speed by page type (homepage: 2.1s, category: 3.4s, product: 2.8s, cart: 4.2s, checkout: 3.9s)

- Speed by traffic source (organic: 2.7s average, paid: 3.1s, social: 4.3s, email: 2.4s)

- Device performance gaps (desktop: 2.3s, mobile: 4.1s)

- Conversion correlation (which speed thresholds correlate with abandonment)

Research from Unbounce: improving load time from 5s to 2s increases conversion rates 42% on average—but impact varies dramatically by page type and audience.

The diagnostic reveals the optimization sequence: "Cart speed optimization (current 4.2s, losing 28% of cart viewers) takes priority over homepage speed (current 2.1s, minimal impact on conversion)."

This prevents wasting budget optimizing fast pages while slow pages bleed revenue.
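One way to look for speed thresholds is to bucket sessions by load time and compare abandonment rates per page type. The sketch below assumes you can export `(page_type, load_seconds, abandoned)` records from your analytics or RUM tool. The bucket edges and sample rows are illustrative, not measured data.

```python
from collections import defaultdict


def bucket_label(load_seconds: float, edges=(2.0, 3.0, 4.0)) -> str:
    """Map a load time to a bucket such as '<3.0s' or '>=4.0s'."""
    for edge in edges:
        if load_seconds < edge:
            return f"<{edge}s"
    return f">={edges[-1]}s"


def abandonment_by_bucket(sessions, edges=(2.0, 3.0, 4.0)):
    """sessions: iterable of (page_type, load_seconds, abandoned) tuples.

    Returns {(page_type, bucket): abandonment rate}.
    """
    counts = defaultdict(lambda: [0, 0])  # key -> [sessions, abandons]
    for page_type, load_seconds, abandoned in sessions:
        key = (page_type, bucket_label(load_seconds, edges))
        counts[key][0] += 1
        counts[key][1] += int(abandoned)
    return {key: abandons / total for key, (total, abandons) in counts.items()}


# Illustrative rows only.
sessions = [
    ("cart", 4.2, True), ("cart", 4.4, True), ("cart", 4.1, False),
    ("cart", 2.5, False),
    ("homepage", 2.1, False), ("homepage", 2.3, True),
]
rates = abandonment_by_bucket(sessions)
for (page_type, bucket), rate in sorted(rates.items()):
    print(f"{page_type} {bucket}: {rate:.0%} abandoned")
```

Correlation here does not prove speed causes the abandonment, but a sharp jump between buckets on one page type is a reasonable signal for where to look first.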

Phase 4: Attribution and Signal Accuracy (Week 3)

Diagnostic question: Does your analytics infrastructure accurately capture the signals determining optimization priority?

Conversion rate optimization services can't optimize what measurement systems don't capture. If analytics show high traffic but low conversion without explaining where and why visitors exit, optimization becomes speculation.

The diagnostic verifies:

- Event tracking completeness: Are micro-conversions (CTA clicks, proof engagement, variant selection, shipping calculator use) captured?

- Funnel accuracy: Do analytics match reality (spot-check 20 random sessions via recordings)?

- Attribution reliability: Can you trace revenue to specific entry points, campaigns, and paths?

- Device segmentation: Are mobile and desktop tracked separately with friction points isolated?

Salesforce research: 80% of customers say experience quality matters as much as products. But experience quality can't be measured without accurate signal capture.

Example diagnostic finding: "Analytics show 2.1% conversion rate but don't capture that mobile users abandon at shipping calculator (missing event tracking). Actual problem: calculator doesn't work on mobile—optimization efforts have been testing around this broken experience."

Vendors starting optimization without verifying measurement accuracy risk optimizing based on incomplete data.
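Event tracking completeness can be checked mechanically before anyone reviews recordings. The sketch below assumes an export of event totals per device segment. The event names are hypothetical placeholders for whatever your analytics setup actually calls these micro-conversions.

```python
# Hypothetical event names; substitute the ones your analytics setup uses.
REQUIRED_EVENTS = {
    "cta_click",
    "proof_engagement",
    "variant_select",
    "shipping_calculator_use",
}


def missing_events(event_counts: dict[str, dict[str, int]]) -> dict[str, list[str]]:
    """Return required events with zero recorded hits, per device segment."""
    gaps = {}
    for device, counts in event_counts.items():
        missing = sorted(e for e in REQUIRED_EVENTS if counts.get(e, 0) == 0)
        if missing:
            gaps[device] = missing
    return gaps


# Illustrative export: event totals per device for the audit window.
export = {
    "desktop": {"cta_click": 5400, "proof_engagement": 1200,
                "variant_select": 2100, "shipping_calculator_use": 640},
    "mobile": {"cta_click": 7300, "proof_engagement": 900,
               "variant_select": 2600},
}
gaps = missing_events(export)
print(gaps)
```

A zero count means either the event is not tracked or the feature is broken for that segment. Both cases deserve a look, as the shipping calculator example above shows.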

The Engagement Framework Protecting Your Budget

Once the diagnostic phase is complete, demand a structured engagement that protects against wasted spend:

Hypothesis Documentation

Before any test launches, vendor must document:

- Which specific friction point the test addresses

- What evidence from diagnostic supports this priority

- Predicted impact range (conservative, likely, optimistic)

- Success criteria (what metric movement validates hypothesis)

This prevents "let's try this and see" testing consuming budget without learning.
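The four documentation requirements above fit naturally into a small record that refuses incomplete entries. This is a sketch of one possible shape. The class and field names are assumptions, not a standard template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    friction_point: str        # which friction point the test addresses
    diagnostic_evidence: str   # what diagnostic finding supports the priority
    impact_conservative: float # predicted relative lift, e.g. 0.02 for 2%
    impact_likely: float
    impact_optimistic: float
    success_metric: str        # metric whose movement validates the hypothesis
    success_threshold: float   # minimum relative lift counted as success

    def __post_init__(self):
        # Reject entries that skip the evidence or leave the target blank.
        if not self.friction_point.strip() or not self.diagnostic_evidence.strip():
            raise ValueError("friction point and diagnostic evidence are required")
        # The three impact estimates must form an ordered range.
        if not (self.impact_conservative <= self.impact_likely <= self.impact_optimistic):
            raise ValueError("impact range must run conservative <= likely <= optimistic")


# Illustrative entry only.
h = Hypothesis(
    friction_point="mobile shipping calculator",
    diagnostic_evidence="session recordings show the calculator failing on mobile",
    impact_conservative=0.02,
    impact_likely=0.05,
    impact_optimistic=0.09,
    success_metric="mobile cart-to-checkout rate",
    success_threshold=0.03,
)
print(h.friction_point, h.impact_likely)
```

A test request that cannot fill in every field is, by this framework's standard, not ready to launch.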

Sequential Testing with Learning Compounding

Tests should build on prior learnings, not scatter across random hypotheses:

- Week 1-2: Test #1 addresses highest-priority friction from diagnostic

- Week 3-4: Test #2 builds on Test #1 learnings OR addresses next priority

- Week 5-6: Test #3 compounds prior learnings OR pivots based on evidence

CXL Institute research: optimization programs with learning compounding frameworks see 3-4x faster improvement than random testing approaches.

Rollback Criteria and Speed

Every test needs predefined rollback triggers:

- Performance decline exceeding 5% triggers automatic revert within 24 hours

- Neutral results (±2%) revert within 7 days

- Only clear winners (>10% sustained lift) ship to 100% traffic

Adobe's research on mature testing programs: 5-10% annual conversion rate improvements come from disciplined winner identification and fast loser elimination—not from keeping marginal tests running.
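The rollback triggers translate directly into a decision rule. The sketch below takes the measured relative lift of a variant and returns an action. Two things are assumptions rather than part of the rules above: results that fall between the stated bands are labeled "keep_running", and the "sustained" requirement for winners is not modeled and still needs a time-window check.

```python
def rollback_decision(relative_lift: float) -> str:
    """Map a variant's relative lift (0.10 means +10%) to an action."""
    if relative_lift < -0.05:
        return "revert_within_24h"    # decline exceeding 5%
    if abs(relative_lift) <= 0.02:
        return "revert_within_7d"     # neutral result
    if relative_lift > 0.10:
        return "ship_to_100_percent"  # clear winner (sustained lift assumed)
    # Assumption: the stated rules do not cover this band.
    return "keep_running"


for lift in (-0.08, 0.01, 0.05, 0.14):
    print(f"{lift:+.0%}: {rollback_decision(lift)}")
```

Agreeing on what happens in the uncovered bands before the first test launches avoids arguing about it with live revenue at stake.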

Revenue Impact Tracking

Monthly reporting must show:

- Cumulative revenue impact from shipped tests

- Budget spent versus revenue generated (ROI calculation)

- Highest-impact optimizations ranked

- Failed hypotheses and learnings extracted

This creates accountability: "We spent $12,000 optimizing checkout flow, generated $47,000 incremental revenue in 90 days, a 3.9x return on spend."
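The arithmetic behind that statement is worth pinning down, because "ROI" is used loosely. The 3.9x figure is incremental revenue divided by spend. Net ROI, which subtracts the spend first, is lower. The function names below are illustrative.

```python
def return_multiple(spend: float, incremental_revenue: float) -> float:
    """Incremental revenue per dollar spent."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return incremental_revenue / spend


def net_roi(spend: float, incremental_revenue: float) -> float:
    """Net gain per dollar spent, after subtracting the spend itself."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return (incremental_revenue - spend) / spend


spend, revenue = 12_000, 47_000
print(f"return multiple: {return_multiple(spend, revenue):.1f}x")
print(f"net ROI: {net_roi(spend, revenue):.1f}x")
```

Ask vendors which of the two definitions their reports use, and whether "incremental revenue" is measured against a control group or against the prior period.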

Red Flags Revealing Weak Conversion Rate Optimization Services

Certain vendor behaviors signal methodology gaps:

Red Flag #1: Immediate Test Proposals Without Diagnosis

"We'll have three tests running by next week" means they're guessing about priorities. Ask: "What diagnostic process identifies these as highest-priority tests?"

Red Flag #2: Portfolio Cases Without Revenue Attribution

"We increased conversion 23% for Client X" without revenue context is meaningless. 23% lift on a page generating $1,000/month differs vastly from 23% on a page generating $100,000/month. Ask: "What was the revenue impact?"

Understanding the difference between tools and services matters here: "CRO Tools That Fix Conversion Leaks Most Teams Miss" covers the diagnostic tools vendors should use during discovery. If vendors can't articulate their diagnostic toolkit, they're likely guessing about optimization priorities.
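The revenue point in Red Flag #2 is simple arithmetic. The sketch below assumes revenue scales in proportion to conversion rate, meaning traffic and average order value hold constant. That assumption is a simplification and should be checked against the case study's actual numbers.

```python
def monthly_revenue_gain(baseline_monthly_revenue: float, relative_lift: float) -> float:
    """Extra monthly revenue from a conversion lift, all else held equal."""
    return baseline_monthly_revenue * relative_lift


for baseline in (1_000, 100_000):
    gain = monthly_revenue_gain(baseline, 0.23)
    print(f"23% lift on ${baseline:,}/month: about ${gain:,.0f}/month")
```

The same percentage produces a hundredfold difference in dollars, which is why a lift figure without the baseline tells you nothing about value delivered.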

Red Flag #3: Template-Based Optimization

"We always start with homepage hero optimization" reveals they're applying templates versus diagnosing your specific friction. Ask: "How do you determine optimization sequence for our specific revenue paths?"

Red Flag #4: Aesthetic-Focused Proposals

"Modern design updates will improve conversion" confuses aesthetics with architecture. Modern design doesn't fix structural friction. Ask: "What friction points does this design change address?"

Red Flag #5: No Rollback Process

"We'll monitor results and adjust as needed" is too vague. Tests failing to deliver need fast rollback preventing revenue loss. Ask: "What criteria trigger automatic rollback, and how quickly does it execute?"

These red flags don't mean the vendor is incompetent—they reveal methodology prioritizing activity over outcomes.

What Success Looks Like in the First 90 Days

Conversion rate optimization services delivering actual value produce specific evidence within 90 days:

Weeks 1-3: Diagnostic Completion

- Revenue path analysis identifying high-value optimization targets

- Conversion architecture audit revealing structural versus cosmetic issues

- Technical performance baseline establishing optimization sequence

- Attribution verification confirming measurement accuracy

Weeks 4-8: Sequential Testing with Learning

- 3-5 tests launched addressing highest-priority friction

- Each test documented with hypothesis, evidence, and success criteria

- Fast rollback of losers, careful scaling of winners

- Learning extraction from both winners and losers

Weeks 9-12: Compounding Impact

- Cumulative revenue lift measurable and attributed to specific changes

- ROI calculation showing spend versus revenue generated

- Second-order optimizations building on first-wave learnings

- Refined diagnostic revealing next-priority friction points

Hotjar's research on behavioral optimization: businesses focusing on systematic friction removal see 2-3x faster results than those running random tests.

"Behavioral Signals That Reveal Revenue Loss Before Conversion Rates Drop" explores the early-warning indicators that diagnostic frameworks should capture, helping prioritize which friction points cost the most before conversion metrics visibly decline.

If 90 days pass without measurable revenue impact and clear learning attribution, the engagement isn't working.

The Self-Diagnostic Option

Not every business needs to hire conversion rate optimization services immediately. If budget is constrained or conversion issues are recent, this self-diagnostic sequence identifies whether internal optimization is viable:

Week 1: Map your top 3 revenue paths using Google Analytics (Source → Landing → Product → Cart → Purchase)

Week 2: Calculate conversion rate for each path segment (what percentage complete each step)

Week 3: Identify the single highest-cost drop-off (the segment that loses the most visitors: visitors entering × drop-off rate)

Week 4: Run 10 session recordings of users hitting that drop-off point

Week 5: Document the top 3 friction patterns observed (what specifically causes hesitation or abandonment)

Week 6: Implement one friction fix and measure impact over 14 days
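Weeks 2 and 3 of this sequence reduce to a short calculation once you have session counts for each stage of a path. The sketch below is one way to do it. The stage names follow the path in Week 1, and the counts are invented for illustration.

```python
def step_dropoffs(stage_counts):
    """stage_counts: ordered list of (stage name, sessions reaching it).

    Returns one row per segment with its conversion rate and visitors lost.
    """
    rows = []
    for (stage, entered), (next_stage, continued) in zip(stage_counts, stage_counts[1:]):
        rate = continued / entered if entered else 0.0
        rows.append({
            "segment": f"{stage} -> {next_stage}",
            "conversion_rate": rate,
            "lost": entered - continued,
        })
    return rows


def highest_cost_dropoff(stage_counts):
    """Segment losing the most visitors (entering visitors x drop-off rate)."""
    return max(step_dropoffs(stage_counts), key=lambda row: row["lost"])


# Illustrative counts for one revenue path.
path = [("Landing", 10_000), ("Product", 4_000), ("Cart", 800), ("Purchase", 400)]
for row in step_dropoffs(path):
    print(f'{row["segment"]}: {row["conversion_rate"]:.0%} continue, {row["lost"]:,} lost')
worst = highest_cost_dropoff(path)
print("review recordings for:", worst["segment"])
```

Raw visitor counts favor the top of the funnel, where some loss is normal. Weighting each segment by the revenue value of a visitor who continues can change which drop-off ranks first.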

If this self-diagnostic reveals obvious structural problems (broken checkout, missing mobile optimization, unclear value proposition) and you have internal resources to fix them, hiring services can wait until you've addressed low-hanging fruit.

If the diagnostic reveals complex friction (attribution problems, technical performance issues, conversion architecture gaps requiring specialized knowledge), services deliver ROI faster than trial-and-error internal work. A CRO Audit Tool Framework That Finds What Testing Misses provides a systematic internal diagnostic method for teams running preliminary assessments before hiring.

When to Hire Versus When to Wait

Conversion rate optimization services deliver highest ROI in specific scenarios:

Hire when:

- Diagnostic reveals friction beyond internal expertise (technical performance, conversion architecture, systematic testing)

- Revenue scale justifies investment (monthly revenue >$50,000 makes service fees economically viable)

- Internal resources are constrained (optimization competes with product development and operations)

- Timeline pressure exists (competitive threat, seasonal peak, funding requirements)

Wait when:

- Self-diagnostic reveals obvious fixes within internal capability (broken forms, missing mobile optimization, unclear messaging)

- Revenue scale doesn't justify service fees (monthly revenue <$20,000 makes DIY more economical)

- Recent changes haven't had time to show impact (wait 60-90 days for changes to mature before hiring services)

- Attribution infrastructure is broken (fix measurement first or services optimize blindly)

The decision isn't "good vendor versus bad vendor"—it's "right timing and fit versus premature hiring."
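The hire and wait criteria can be written down as a checklist function so the decision is made the same way each time it comes up. This is a sketch: the parameter names are assumptions, the 60-day cutoff takes the lower end of the 60-90 day range, and revenue between $20,000 and $50,000 is returned as a judgment call because the criteria above leave that band open.

```python
def hire_or_wait(monthly_revenue: float,
                 fixes_within_internal_capability: bool,
                 attribution_reliable: bool,
                 days_since_last_change: int):
    """Return (decision, reasons) based on the hire/wait criteria."""
    wait_reasons = []
    if fixes_within_internal_capability:
        wait_reasons.append("obvious fixes are within internal capability")
    if monthly_revenue < 20_000:
        wait_reasons.append("revenue scale does not justify service fees")
    if days_since_last_change < 60:
        wait_reasons.append("recent changes have not had time to show impact")
    if not attribution_reliable:
        wait_reasons.append("fix measurement before hiring")
    if wait_reasons:
        return "wait", wait_reasons
    if monthly_revenue > 50_000:
        return "hire", ["revenue scale justifies the investment"]
    # Assumption: the criteria do not settle the $20,000-$50,000 band.
    return "judgment call", ["revenue falls between the stated thresholds"]


decision, reasons = hire_or_wait(80_000, False, True, 120)
print(decision, reasons)
```

Internal resource constraints and timeline pressure are left out because they are hard to reduce to a number. Treat them as tie-breakers when the function returns a judgment call.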

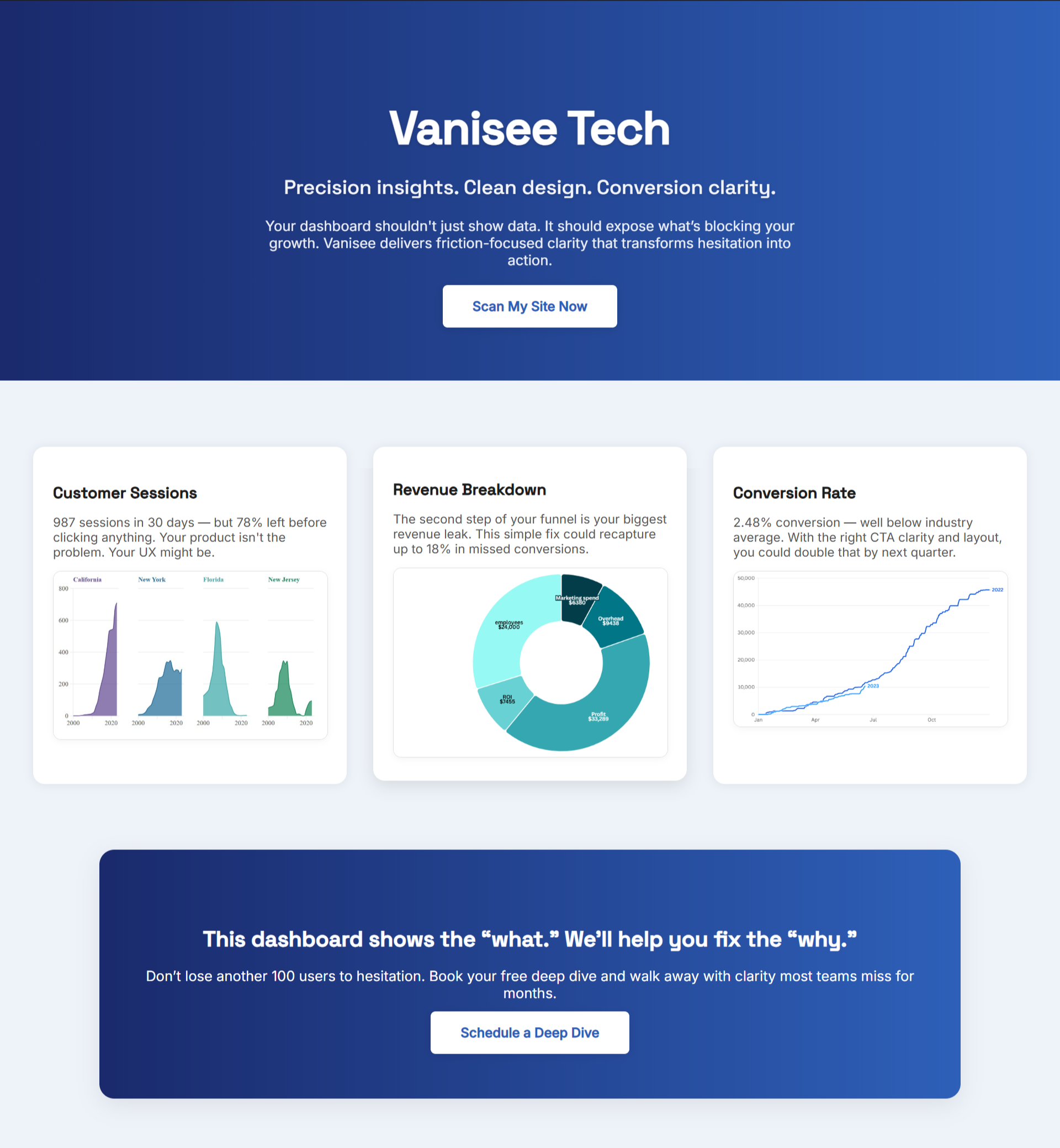

How BluePing Accelerates the Diagnostic Phase

The diagnostic framework described above typically requires 3-4 weeks when executed manually: analytics analysis, session recording review, technical performance testing, conversion architecture audit.

BluePing compresses this diagnostic timeline into minutes. It scans live pages and identifies:

- Conversion architecture gaps: First-screen clarity issues, proof positioning problems, decision support deficiencies

- Technical performance impact: Which slow-loading elements affect decision points

- Friction point priorities: Ranked by revenue impact potential

- Mobile-specific issues: Layout problems, tap target failures, form friction

This diagnostic output serves two purposes:

- If hiring services: Provides vendor-agnostic baseline for evaluating proposals (do their recommendations align with diagnostic evidence?)

- If optimizing internally: Prioritizes highest-impact fixes preventing wasted effort on low-value changes

The scan creates accountability: vendors can't propose cosmetic changes when diagnostic evidence shows structural friction. Internal teams can't waste time on low-priority optimizations when high-cost friction is documented.
