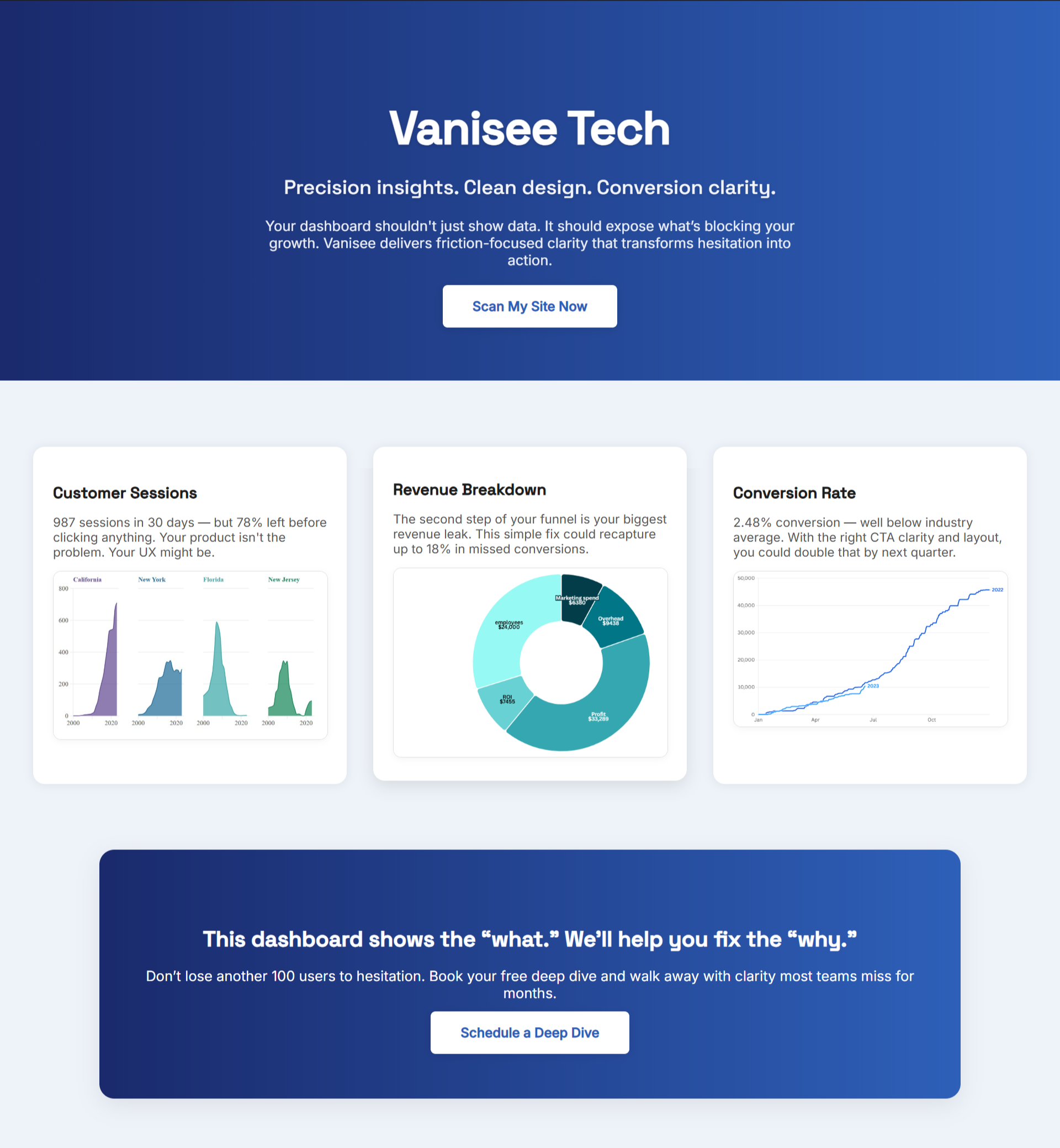

Monthly tool spend: $2,400. Heatmap software: $299. Session recording: $199. A/B testing platform: $899. Analytics suite: $399. Form analytics: $149. Conversion tracking: $299. Total investment: $28,800 annually.

Revenue lift attributed to tool insights last quarter: unmeasured. Tests shipped from tool data: 3. Conversion improvements tied to specific tool findings: unclear. ROI calculation: nonexistent.

The renewal decision arrives in 30 days. Sales team promises "essential insights" and "industry-leading features." But the financial question remains unanswered: did these tools generate more revenue than they cost?

Without measurement discipline, CRO tool budgets grow while actual conversion impact remains invisible. Tools multiply—heatmaps, session recordings, form analytics, user testing platforms, attribution software—each promising visibility into conversion barriers. Yet most teams cannot demonstrate that any single tool paid for itself.

This creates a specific financial risk: paying thousands monthly for tools that provide activity (data dashboards, reports, recordings) without accountability (revenue outcomes, conversion improvements, measurable ROI).

"What gets measured gets managed." — Peter Drucker

The CRO Tool Cost Problem Most Teams Ignore

Tool vendors sell on capabilities: "See every click," "Understand user intent," "Identify friction points." These features matter—but only if they translate into measurable conversion improvements exceeding tool costs.

The financial reality: teams testing 10+ A/B variations achieve 86% better conversion results than those running fewer tests. But this statistic hides critical context—did the tool enable those tests, or would the team have run them regardless? Attribution between tool capability and actual business outcomes determines whether monthly subscriptions justify their expense.

Research on high-performing optimization programs reveals teams running 50+ A/B tests annually with systematic frameworks see sustained conversion improvements. But "systematic framework" doesn't require expensive tools—it requires disciplined hypothesis testing, regardless of software stack sophistication.

The cost calculus: if your current tool stack costs $2,400 monthly ($28,800 annually) and your monthly revenue is $150,000, tools must drive conversion improvements generating more than 1.6% additional revenue just to break even. Even a 5% conversion lift only counts toward paying back tool costs if you can show that the lift came from tool-enabled insights rather than from changes the team would have identified regardless.
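
The break-even arithmetic above can be sketched in a few lines of Python. This is a minimal illustration using the figures from the example; the function name `breakeven_lift_share` is hypothetical and not part of any tool's API.

```python
def breakeven_lift_share(monthly_tool_cost: float, monthly_revenue: float) -> float:
    """Fraction of monthly revenue the tools must add just to cover their cost."""
    if monthly_revenue <= 0:
        raise ValueError("monthly_revenue must be positive")
    return monthly_tool_cost / monthly_revenue


share = breakeven_lift_share(2_400, 150_000)
print(f"Break-even revenue lift: {share:.1%}")  # Break-even revenue lift: 1.6%
```

Any lift counted against this threshold should come only from tests attributed to the tools, which is what the framework later in the article tracks.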

The Three-Tier Tool Cost Framework

CRO tools fall into distinct financial categories based on both subscription cost and implementation complexity. Understanding these tiers reveals which tools justify their expense and which represent budget waste.

Tier 1: Foundation Tools ($0-500/month)

Google Analytics, Hotjar Starter, Microsoft Clarity (free)

These tools provide basic traffic analysis, simple heatmaps, and session recordings at minimal cost. Their ROI threshold is low—they only need to enable a single meaningful conversion test annually to justify expense.

Breakeven requirement: One test producing 0.5-1% conversion lift

Red flag: If foundation tools sit unused for 60+ days, downgrade or eliminate. Free alternatives (Microsoft Clarity for session recordings, Google Analytics for traffic analysis) deliver comparable insights without subscription costs.

Tier 2: Optimization Tools ($500-1,500/month)

Optimizely, VWO, Hotjar Business, FullStory, Heap

Mid-tier tools enable multivariate testing, advanced segmentation, and detailed user journey analysis. Their higher cost demands measurable conversion improvements—not just data collection.

Breakeven requirement: Monthly conversion improvements generating 2-3x tool cost in additional revenue

Research on form optimization shows reducing form fields from 11 to 4 increases conversions by 120%. Suppose mid-tier form analytics software costs $800 monthly and identifies this optimization on a checkout generating $50,000 monthly revenue. A lift anywhere near that size would add far more than $800 in monthly revenue, so the tool pays for itself, but only if the insight wouldn't have occurred through other means.

The critical question: would your team have discovered the same optimization through free tools, user feedback, or basic analytics? If yes, the premium tool isn't earning its cost.

Tier 3: Enterprise Tools ($1,500+/month)

Adobe Target, Quantum Metric, ContentSquare, Decibel

Enterprise platforms combine attribution modeling, predictive analytics, AI-driven insights, and cross-device tracking. Their premium pricing demands proof of high-value conversion improvements—not feature lists.

Breakeven requirement: Annual revenue lift exceeding 5x annual tool cost

Example: Enterprise attribution software costs $3,000 monthly ($36,000 annually). To justify this expense, the tool must enable conversion improvements generating $180,000+ additional annual revenue. This requires tracking specific attribution insights that led to tests producing measurable, sustained conversion lifts.

Without this measurement discipline, enterprise tools become expensive dashboards displaying data that free alternatives capture equally well.
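
The tier thresholds can be expressed as a simple check. The sketch below is illustrative only: the dictionary keys and function names are assumptions, Tier 2 uses the low end of the "2-3x" range, and Tier 1 is omitted because its requirement is a single test rather than a revenue multiple.

```python
# Multiples come from the tier descriptions; Tier 2 uses the low end of "2-3x".
# The dictionary keys and function names are illustrative assumptions.
REQUIRED_MULTIPLE = {"optimization": 2.0, "enterprise": 5.0}


def required_annual_lift(tier: str, monthly_cost: float) -> float:
    """Annual revenue lift a tool must enable to clear its tier's threshold."""
    return REQUIRED_MULTIPLE[tier] * monthly_cost * 12


def clears_threshold(tier: str, monthly_cost: float, measured_annual_lift: float) -> bool:
    """True when the measured, tool-attributed lift meets the tier requirement."""
    return measured_annual_lift >= required_annual_lift(tier, monthly_cost)


print(required_annual_lift("enterprise", 3_000))      # 180000.0
print(clears_threshold("enterprise", 3_000, 95_000))  # False
```

The first call reproduces the $180,000 figure from the enterprise attribution example; the $95,000 in the second call is a made-up measurement used only to show a failing case.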

The ROI Calculation Framework Revealing Tool Value

Tool ROI requires connecting specific tool insights to conversion tests producing measurable revenue impact. This four-step framework exposes which tools earn their cost and which consume budget without return.

Step 1: Hypothesis Attribution (Week 1)

Before running any conversion test, document the source of the hypothesis:

- Tool-derived hypothesis: Specific insight only available through paid tool (heatmap revealing unexpected click patterns, session recording showing friction point, form analytics identifying field abandonment)

- Alternative-source hypothesis: Insight available through free tools, customer feedback, support tickets, or team observation

Example tracking:

Test #1: Reduce checkout fields based on form analytics showing 68% abandon at shipping address

Tool source: Hotjar Form Analytics ($149/month)

Alternative availability: No—form-level abandonment data unavailable in Google Analytics

Attribution: Tool-enabled

Test #2: Improve mobile CTA contrast based on low mobile click-through

Tool source: Google Analytics (free)

Alternative availability: Yes—mobile conversion data visible in free analytics

Attribution: Not tool-dependent

This attribution reveals whether premium tools provide insights unavailable elsewhere. If 80% of test hypotheses come from free alternatives, premium tools aren't earning their subscription fees.
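
One way to keep this log is a small record per test. The sketch below is a hypothetical structure, not a feature of any tool named here; the class name, field names, and `free_source_share` helper are assumptions, and the two entries are the example tests above.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    test_name: str
    tool_source: str
    monthly_tool_cost: float
    available_in_free_tools: bool  # could a free source have surfaced this?

    @property
    def attribution(self) -> str:
        return "Not tool-dependent" if self.available_in_free_tools else "Tool-enabled"


def free_source_share(log: list[Hypothesis]) -> float:
    """Fraction of hypotheses that did not need a paid tool."""
    if not log:
        return 0.0
    return sum(h.available_in_free_tools for h in log) / len(log)


log = [
    Hypothesis("Reduce checkout fields", "Hotjar Form Analytics", 149, False),
    Hypothesis("Improve mobile CTA contrast", "Google Analytics", 0, True),
]

for h in log:
    print(f"{h.test_name}: {h.attribution}")

# The 80% cut-off mirrors the rule of thumb in the text.
if free_source_share(log) >= 0.80:
    print("Premium tools are not earning their subscription fees")
```

Recording the attribution before the test runs matters: deciding afterwards whether a free source "could have" surfaced the insight invites hindsight bias.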

Step 2: Revenue Impact Measurement (Week 2-6)

Track conversion improvements from tool-attributed tests:

Formula:

Tool ROI = (Revenue lift from tool-attributed tests - Tool cost) / Tool cost × 100

Example calculation:

Hotjar Form Analytics ($149/month):

- Test #1 (tool-attributed): Checkout field reduction

- Baseline conversion: 2.1%

- Post-test conversion: 3.8%

- Traffic: 12,000 monthly visitors

- Average order value: $85

- Monthly revenue lift: (3.8% - 2.1%) × 12,000 × $85 = $17,340

- Monthly ROI: ($17,340 - $149) / $149 × 100 = 11,538%

This test alone justifies annual Hotjar subscription. The tool paid for itself 115x over in month one.

Optimizely A/B Testing ($899/month):

- Tests run: 8 monthly

- Tool-attributed tests: 2 (remaining 6 used hypotheses from free analytics/user feedback)

- Revenue lift from tool-attributed tests: $4,200 monthly

- Monthly ROI: ($4,200 - $899) / $899 × 100 = 367%

Tool justifies cost but attribution reveals most tests don't require premium platform—team could run 75% of tests through free alternatives.

Adobe Target ($3,000/month):

- Tests run: 12 monthly

- Tool-attributed tests: 1 (AI-driven personalization insight)

- Revenue lift from tool-attributed tests: $2,100 monthly

- Monthly ROI: ($2,100 - $3,000) / $3,000 × 100 = -30%

Tool loses money monthly. Features don't translate to insights unavailable through cheaper alternatives.
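
The three calculations can be reproduced with a short script. This is a sketch of the formula given in Step 2 using the inputs stated in the examples; the function names are illustrative.

```python
def monthly_revenue_lift(baseline_rate: float, new_rate: float,
                         visitors: int, average_order_value: float) -> float:
    """Extra monthly revenue implied by a conversion-rate change."""
    return (new_rate - baseline_rate) * visitors * average_order_value


def tool_roi_percent(revenue_lift: float, tool_cost: float) -> float:
    """Tool ROI = (revenue lift - tool cost) / tool cost x 100."""
    return (revenue_lift - tool_cost) / tool_cost * 100


hotjar_lift = monthly_revenue_lift(0.021, 0.038, 12_000, 85)  # about $17,340

examples = {
    "Hotjar Form Analytics": (hotjar_lift, 149),
    "Optimizely": (4_200, 899),
    "Adobe Target": (2_100, 3_000),
}
for tool, (lift, cost) in examples.items():
    print(f"{tool}: {tool_roi_percent(lift, cost):,.0f}%")
```

Run as written, the Optimizely and Adobe Target rows come out at 367% and -30%, and the Hotjar inputs evaluate to roughly 11,538%. Only revenue from tool-attributed tests should be passed in as `revenue_lift`; including lift from tests that free sources inspired would overstate the tool's return.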

Step 3: Opportunity Cost Analysis (Week 7)

Compare tool spend against alternative uses:

If Adobe Target costs $36,000 annually but delivers negative ROI while Hotjar ($1,788 annually) delivers 11,538% ROI, reallocating the Adobe budget produces better returns. Candidates include additional Hotjar seats for team members, a user testing budget, or contractor time for test implementation.

Opportunity cost questions:

- Would contractor hours implementing more tests (at $75-150/hour) produce better ROI than premium tool features?

- Would additional team training on free tools ($2,000 one-time) eliminate need for premium subscriptions?

- Would user testing budget ($299/month for UserTesting.com) provide higher-value insights than session recording upgrades?

Step 4: Tool Consolidation Decision (Week 8)

Review tool stack for overlapping capabilities:

Redundancy audit:

- Heatmaps: Available in Hotjar, Microsoft Clarity (free), Google Analytics

- Session recordings: Available in Hotjar, FullStory, Microsoft Clarity (free)

- Form analytics: Available in Hotjar, Google Analytics (with events)

- A/B testing: Available in Optimizely, Google Optimize (free), VWO

If three tools provide session recordings but only one (premium subscription) gets used regularly, eliminate redundant subscriptions.
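
A redundancy audit like this can be sketched as a set comparison. The stack below is illustrative: the prices come from figures quoted in this article, the `used_regularly` flags are assumptions rather than measured data, and the `Tool` type and function name are hypothetical.

```python
from typing import NamedTuple


class Tool(NamedTuple):
    name: str
    monthly_cost: float
    capabilities: frozenset
    used_regularly: bool


# Illustrative stack; the usage flags are assumptions, not measured data.
STACK = [
    Tool("Hotjar", 149, frozenset({"heatmaps", "session recordings", "form analytics"}), True),
    Tool("FullStory", 499, frozenset({"session recordings"}), False),
    Tool("Microsoft Clarity", 0, frozenset({"heatmaps", "session recordings"}), False),
]


def redundant_paid_tools(stack: list[Tool]) -> list[str]:
    """Paid, rarely used tools whose capabilities are all covered elsewhere in the stack."""
    redundant = []
    for tool in stack:
        if tool.monthly_cost == 0 or tool.used_regularly:
            continue
        covered_elsewhere = set()
        for other in stack:
            if other is not tool:
                covered_elsewhere |= other.capabilities
        if tool.capabilities <= covered_elsewhere:
            redundant.append(tool.name)
    return redundant


print(redundant_paid_tools(STACK))  # ['FullStory']
```

The check is deliberately conservative: a tool is only flagged when it is paid, rarely used, and fully covered by the rest of the stack, so a premium tool with even one unique capability still gets a manual review.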

Data shows companies with 31-40 landing pages generate 7 times more leads than those with fewer pages—but this metric applies to content creation, not tool proliferation. More tools ≠ more tests ≠ better results. Discipline in running systematic tests matters more than dashboard sophistication.

The Signals Revealing Tool Underperformance

Certain patterns expose tools consuming budget without delivering conversion improvements:

Signal #1: High Tool Usage, Low Test Volume

Symptom: Team logs into tool daily, reviews dashboards weekly, runs 1-2 tests quarterly

Financial impact: Tool provides activity (data review) without outcomes (tests shipped)

Fix: Set minimum test velocity threshold—if tool costs >$500/month, require 4+ tool-attributed tests quarterly or downgrade

Signal #2: Test Hypotheses Don't Require Premium Features

Symptom: 80%+ of tests use hypotheses from free analytics, customer feedback, or team intuition

Financial impact: Premium tools sit unused while free alternatives drive actual testing

Fix: Track hypothesis sources for 90 days. If <30% of tests require premium tool insights, cancel subscription

Signal #3: Tool Reporting Replaces Test Execution

Symptom: Team spends hours creating reports from tool data, ships tests quarterly

Financial impact: Tool becomes documentation system instead of conversion improvement accelerator

Fix: Measure hours spent in tool vs. tests shipped from tool insights. If ratio >10:1 (10 hours analysis per 1 test shipped), tool wastes time

Signal #4: Vendor-Reported ROI Doesn't Match Internal Measurement

Symptom: Vendor claims "customers see average 40% conversion lift" but internal tests show 2-5% improvements

Financial impact: Marketing claims vs. measurable reality gap indicates tool oversold

Fix: Demand vendor provide methodology behind ROI claims. If unverifiable or based on best-case scenarios, discount claims 80%

Signal #5: Tool Insights Duplicate Free Alternative Capabilities

Symptom: Premium tool reveals "page load time impacts conversion" (available in free Google Analytics speed reports) or "mobile users abandon more" (visible in free GA device reports)

Financial impact: Paying for data visualization of insights free tools already capture

Fix: Compare premium tool reports to free alternatives. If >60% overlap, premium tool isn't delivering unique value
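
The numeric thresholds in these fixes can be combined into one quarterly check. The sketch below covers Signals #1, #2, #3, and #5 (Signal #4 depends on vendor claims rather than internal counts); the class, field names, and flag labels are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class QuarterlyToolStats:
    monthly_cost: float
    tool_attributed_tests: int        # tests whose hypothesis needed this tool
    total_tests: int                  # all tests shipped in the quarter
    analysis_hours: float             # hours spent inside the tool
    overlap_with_free_reports: float  # 0.0-1.0, estimated by manual comparison


def underperformance_signals(s: QuarterlyToolStats) -> list[str]:
    """Return the signals this tool trips, using the thresholds from the fixes."""
    flags = []
    if s.monthly_cost > 500 and s.tool_attributed_tests < 4:
        flags.append("low test velocity")
    share = s.tool_attributed_tests / s.total_tests if s.total_tests else 0.0
    if share < 0.30:
        flags.append("hypotheses rarely need premium features")
    hours_per_test = (s.analysis_hours / s.tool_attributed_tests
                      if s.tool_attributed_tests else float("inf"))
    if s.analysis_hours > 0 and hours_per_test > 10:
        flags.append("reporting replaces test execution")
    if s.overlap_with_free_reports > 0.60:
        flags.append("duplicates free alternatives")
    return flags


stats = QuarterlyToolStats(899, 2, 8, 30, 0.7)  # hypothetical quarter
print(underperformance_signals(stats))
```

With these made-up inputs all four flags fire: 2 attributed tests on an $899 tool, a 25% premium share, 15 hours of analysis per attributed test, and 70% report overlap.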

When Premium Tools Justify Cost vs. When Free Alternatives Win

Not all expensive tools waste budget—but justification requires proof of unique insights producing measurable conversion improvements unavailable through free alternatives.

Premium Tools Justify Cost When:

Capability gap exists between free and paid options:

Example: Form analytics showing field-level abandonment data unavailable in Google Analytics

Time savings exceed cost:

Example: $1,500/month enterprise tool saves 40 team hours monthly (worth $6,000 at $150/hour average) through automated reporting and analysis

Attribution complexity requires advanced tracking:

Example: Multi-touch attribution software ($2,000/month) accurately credits revenue to specific traffic sources, enabling budget reallocation producing $15,000+ monthly lift

AI-driven insights consistently surface non-obvious optimizations:

Example: Machine learning tool identifies segment-specific friction (mobile users from paid social abandon at specific checkout step) invisible in aggregate analytics

Free Alternatives Win When:

Core insights available without subscription:

Example: Google Analytics + Microsoft Clarity provide traffic analysis, heatmaps, session recordings, and funnel visualization at $0/month

Team lacks bandwidth to act on premium insights:

Example: Enterprise attribution platform costs $3,000/month but team ships 2 tests quarterly—insights exceed execution capacity

Test velocity constraints exist upstream of tooling:

Example: Development team bottleneck limits test implementation to 1 test/month regardless of tool sophistication

Budget constraints make tool cost exceed potential revenue impact:

Example: $500/month tool applied to $5,000/month revenue stream cannot justify ROI (would require 10%+ sustained conversion lift)

Research confirms mobile traffic represents 82.9% of landing page visits, meaning mobile optimization matters more than tool sophistication. A free tool identifying mobile friction outperforms an expensive desktop-focused platform shipping no mobile tests.

The Tool Audit Revealing Budget Waste

Run this systematic audit quarterly to expose tools consuming budget without delivering conversion improvements:

Week 1: Usage Tracking

Log every tool login, report view, and feature use for 30 days. Track:

- Total hours spent in each tool

- Features used vs. features paid for

- Reports generated vs. reports acted upon

- Tests shipped directly from tool insights

Red flag: Tool logins <2x/month or feature utilization <30% of subscription capabilities indicates waste
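
Once the 30-day log exists, the red-flag rule is a two-line check. A minimal sketch, assuming features are simply counted; the function name and flag wording are hypothetical.

```python
def usage_red_flags(logins_per_month: int, features_used: int,
                    features_paid_for: int) -> list[str]:
    """Apply the Week 1 thresholds: under 2 logins/month or under 30% utilization."""
    flags = []
    if logins_per_month < 2:
        flags.append("fewer than 2 logins per month")
    if features_paid_for > 0 and features_used / features_paid_for < 0.30:
        flags.append("feature utilization below 30%")
    return flags


# Hypothetical tool: one login last month, 3 of 12 paid features touched.
print(usage_red_flags(logins_per_month=1, features_used=3, features_paid_for=12))
```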

Week 2: Hypothesis Source Attribution

Review last 20 conversion tests. Categorize hypothesis sources:

- Premium tool exclusive insights: 4 tests (20%)

- Free tool insights: 12 tests (60%)

- Customer feedback/support tickets: 3 tests (15%)

- Team intuition: 1 test (5%)

Red flag: If premium tools contributed <40% of test hypotheses, free alternatives deliver equal value

Week 3: Revenue Impact Calculation

Calculate revenue lift from tool-attributed tests using Step 2 framework above. Compare:

- Tool annual cost: $10,788 (Optimizely)

- Revenue lift from tool-attributed tests: $24,600 annually

- ROI: 128%

Decision: Tool justifies cost—but track quarterly to ensure sustained performance

Week 4: Consolidation Opportunity Identification

Map tool capabilities to usage:

Heatmaps:

- Hotjar ($149/month): Used weekly

- Microsoft Clarity (free): Not implemented

- Action: Cancel Hotjar, implement Clarity—save $1,788 annually with equivalent insights

Session Recordings:

- FullStory ($499/month): Used monthly

- Hotjar ($149/month): Overlapping capability unused

- Action: If Hotjar already provides session recordings, evaluate whether FullStory's premium features justify $350/month premium

A/B Testing:

- Optimizely ($899/month): Runs 8 tests/month

- Google Optimize (free): Not utilized

- Action: Pilot Google Optimize for 60 days. If comparable test execution, downgrade to free alternative

The Tool Stack Evolution Preventing Future Waste

Tool needs change as conversion optimization programs mature. Right-sizing stack to current program sophistication prevents paying for capabilities exceeding execution capacity.

Stage 1: Foundation (0-10 tests/quarter)

Recommended stack:

- Google Analytics (free)

- Microsoft Clarity (free)

- Google Optimize (free)

- Total cost: $0/month

Justification: Free tools provide sufficient insights for early-stage programs. Premium tools waste budget when test velocity remains low.

Upgrade trigger: Shipping 10+ tests quarterly with documented hypotheses exceeding free tool capabilities

Stage 2: Growth (10-30 tests/quarter)

Recommended stack:

- Google Analytics (free)

- Hotjar Business ($299/month)

- VWO or Optimizely Starter ($400-600/month)

- Total cost: $700-900/month

Justification: Form analytics and multivariate testing capabilities justify premium subscriptions when test velocity supports ROI

Upgrade trigger: Attribution complexity requires advanced tracking or AI-driven insights consistently surface non-obvious optimizations

Stage 3: Maturity (30+ tests/quarter)

Recommended stack:

- Google Analytics 360 ($150,000/year) or equivalent

- Enterprise testing platform ($1,500+/month)

- Advanced attribution software ($2,000+/month)

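- Total cost: $16,000+/month at the prices listed above ($12,500 + $1,500 + $2,000); less if a cheaper Analytics 360 equivalent is used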

Justification: High test velocity, complex attribution needs, and large revenue base support enterprise tool ROI

Downgrade trigger: Test velocity drops below 20/quarter or revenue attribution becomes unclear
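
The stage boundaries and upgrade triggers can be read as a small decision rule. The sketch below is one possible interpretation, not a definitive mapping: it assigns the boundary values (10 and 30 tests) to the higher stage, and it collapses the qualitative triggers into two boolean flags whose names are assumptions.

```python
def recommended_stage(tests_per_quarter: int,
                      hypotheses_exceed_free_tools: bool,
                      needs_advanced_capabilities: bool = False) -> str:
    """Map test velocity and upgrade triggers to a stack stage."""
    # Below 10 tests, or while free tools still cover every hypothesis, stay on the free stack.
    if tests_per_quarter < 10 or not hypotheses_exceed_free_tools:
        return "Stage 1: Foundation"
    # Enterprise tooling needs both high velocity and a documented capability gap.
    if tests_per_quarter >= 30 and needs_advanced_capabilities:
        return "Stage 3: Maturity"
    return "Stage 2: Growth"


print(recommended_stage(6, True))          # Stage 1: Foundation
print(recommended_stage(18, True))         # Stage 2: Growth
print(recommended_stage(35, True, True))   # Stage 3: Maturity
```

Requiring both velocity and a capability gap keeps a team that ships many simple tests from being pushed toward enterprise pricing on volume alone.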

Red Flags From Vendors Hiding Poor Tool ROI

Certain vendor behaviors signal tools won't deliver measurable conversion improvements justifying their cost:

Red Flag #1: Case Studies Lack Revenue Attribution

Vendor claims "Client X improved conversion 45%" without explaining whether improvement came from tool insights, design changes, pricing adjustments, or seasonal factors. Ask: "What was the revenue impact, and how much was directly attributable to tool-specific insights?"

Red Flag #2: Free Trials Too Short for Meaningful Testing

Fourteen-day trials end before most tests can run to completion (reaching statistical significance typically takes 2-4 weeks at minimum), so the vendor is banking on commitment before proof. Demand: 60-90 day pilot with clear success metrics defined upfront.

Red Flag #3: Implementation Complexity Exceeds Test Velocity

Tool requires 40 hours of developer time to integrate but team ships 4 tests quarterly. Implementation cost ($6,000 at $150/hour) + subscription ($1,200 quarterly) = $7,200 investment supporting minimal test volume. Tool complexity exceeds program maturity.
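
Dividing that first-quarter investment by test volume makes the mismatch concrete. A minimal sketch using the figures above; the per-test number is derived here and the function name is hypothetical.

```python
def first_quarter_investment(integration_hours: float, hourly_rate: float,
                             quarterly_subscription: float) -> float:
    """One-time integration cost plus the first quarter of subscription fees."""
    return integration_hours * hourly_rate + quarterly_subscription


total = first_quarter_investment(40, 150, 1_200)
per_test = total / 4  # team ships 4 tests per quarter
print(f"Total: ${total:,.0f}, per test: ${per_test:,.0f}")  # Total: $7,200, per test: $1,800
```

At $1,800 of tooling cost per test, each test has to clear a much higher revenue bar than it would on a stack with little or no integration work.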

Red Flag #4: Feature Lists Without Conversion Impact Examples

Vendor highlights "AI-powered insights" and "real-time segmentation" without showing specific conversion tests these features enabled. Features don't equal outcomes. Demand: Customer references with documented revenue lift from specific tool capabilities.

Red Flag #5: Annual Contracts Pushed Before the Tool Is Proven

Vendor offers "significant discount" for annual prepayment despite tool being unproven in your environment. Annual lock-in prevents course-correction if tool underperforms. Counter: Monthly billing for first 6 months, then evaluate annual commitment based on documented ROI.

Research shows 53% of mobile visitors abandon sites loading >3 seconds—but identifying this requires basic analytics, not enterprise tools. Premium subscriptions should solve problems free tools cannot address.

How to Actually Prove CRO Tools Pay for Themselves

Most teams guess at tool value. This measurement system provides proof:

Month 1: Baseline Establishment

Action: Run current tools with hypothesis source tracking

Measure:

- Tests shipped: 6

- Tool-attributed hypotheses: 2 (33%)

- Revenue lift from tool-attributed tests: $8,400

- Tool costs: $2,400

- Preliminary ROI: 250%

Month 2-3: Alternative Testing

Action: Run identical test velocity using free tool alternatives

Measure:

- Tests shipped: 6 each month (12 total)

- Tool-attributed hypotheses: 4 (33%)—similar ratio with free tools

- Revenue lift: $16,200

- Tool costs: $0 (free alternatives)

- Alternative ROI: Infinite (no cost)

Insight: If free tools deliver comparable test volumes and revenue impact, premium tools aren't justifying expense

Month 4: Decision Point

Compare performance:

Premium stack (Month 1): 250% ROI, $8,400 revenue lift, $2,400 cost

Free stack (Month 2-3 average): Infinite ROI, $8,100 average monthly revenue lift, $0 cost

Decision: Free alternatives deliver 96% of revenue impact at 0% of cost. Cancel premium subscriptions, reallocate $28,800 annual savings to test implementation budget or team training.

Exception: If premium tools surfaced unique insights producing outsized revenue impact (1 test generating $50,000+ lift vs. free tool tests averaging $8,000), premium cost justified despite lower overall test volume.
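
The Month 4 comparison can be sketched as follows. Because ROI is undefined when cost is zero, this illustration compares net monthly gain instead; the function and key names are assumptions, and the inputs are the example figures from Months 1 through 3.

```python
def compare_stacks(premium_lift: float, premium_cost: float,
                   free_lift_total: float, free_months: int) -> dict:
    """Compare one month of the premium stack against the free-stack monthly average."""
    free_lift = free_lift_total / free_months
    return {
        "free_share_of_premium_lift": free_lift / premium_lift,
        "premium_net_gain": premium_lift - premium_cost,
        "free_net_gain": free_lift,  # the free stack has no subscription cost
        "annual_savings_if_cancelled": premium_cost * 12,
    }


result = compare_stacks(8_400, 2_400, 16_200, 2)
print(f"Free stack delivers {result['free_share_of_premium_lift']:.0%} of premium lift")
print(f"Net monthly gain: premium ${result['premium_net_gain']:,.0f}, "
      f"free ${result['free_net_gain']:,.0f}")
```

On these numbers the free stack reaches about 96% of the premium lift and a higher net gain ($8,100 versus $6,000 per month). A single month of premium data is a thin baseline, so the exception above still applies when one premium-only insight produces an outsized result.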

Why BluePing Reveals Tool ROI Gaps Other Software Misses

Traditional CRO tools measure what happened (clicks, scrolls, conversions) without connecting findings to revenue outcomes. This creates an illusion of value: dashboards show data without proving that the data led to conversion improvements exceeding tool costs.

BluePing addresses this gap by identifying conversion friction points tied to specific page elements, enabling:

Direct hypothesis attribution: Each friction point surfaces as testable hypothesis with clear revenue impact if resolved

ROI forecasting: Estimated revenue lift from fixing identified friction vs. cost to implement test reveals expected ROI before shipping

Tool necessity evaluation: If BluePing identifies friction points visible in free analytics, premium tools aren't delivering unique value

Example: BluePing scans checkout flow and identifies:

- Mobile CTA below fold (impacts 82.9% of traffic based on mobile visitor rates)

- Form friction: 11 fields vs. industry benchmark of 4 (research shows 120% conversion increase when reducing to 4 fields)

- Missing trust signals near payment button (76.8% of marketers overlook social proof placement)

Each identified friction point includes:

- Revenue impact estimate (based on traffic volume and conversion lift research)

- Implementation complexity (design-only vs. development required)

- Free tool alternative (could Google Analytics + manual review identify this?)

This enables calculating whether premium tools would have surfaced same insights:

Microsoft Clarity (free): Identifies CTA position through scroll heatmaps

Google Analytics (free): Identifies form abandonment rates (but not field-level data)

Premium form analytics ($149/month): Identifies specific field causing abandonment

ROI decision: Premium form tool justified if field-level insight enables test producing >$1,788 annual revenue lift (breakeven). BluePing's revenue impact forecast ($12,000 annual lift from form optimization) exceeds that breakeven by a wide margin, which suggests the tool justifies its cost, provided the forecast holds up in an actual test.
