Four weeks into an A/B testing program, with sixteen variations tested and $12,000 spent on designer fees and a testing platform subscription, the conversion rate stayed flat at 1.8%. The variations tested button colors, headline phrasing, image positioning, and form length. None addressed the actual problem: structural design mistakes that prevented visitors from understanding the offer before they even reached the test elements.

The testing program measured surface-level changes while foundational design flaws—invisible value proposition, buried trust signals, mobile layout collapse—blocked conversion regardless of which variation won. The A/B tests answered the wrong questions because the page design prevented visitors from progressing far enough to encounter the tested elements.

According to research analyzing landing page performance, the median conversion rate across industries is 6.6%, yet nearly 48% of website visitors exit the main landing page without any further interaction. The gap between potential and actual performance often stems from structural design mistakes that prevent meaningful A/B test results—teams optimize button colors on pages where visitors can't comprehend the offer.

The framework below identifies design mistakes that prevent conversion before testing begins—structural flaws requiring fixes, not variations.

"Good design is obvious. Great design is transparent." — Joe Sparano

Why Landing Page Design Mistakes Make A/B Testing Worthless

Traditional A/B testing workflow assumes the landing page foundation works:

Step 1: Identify element to test (headline, CTA button, hero image)

Step 2: Create variation (blue button vs orange button)

Step 3: Split traffic 50/50 between control and variation

Step 4: Measure conversion rate difference

Step 5: Implement winner

This workflow fails when structural design mistakes prevent visitors from reaching the tested element. Testing headline variations when value proposition remains incomprehensible wastes budget. Testing CTA button colors when visitors abandon before scrolling to the button produces meaningless data.
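Step 4 of the traditional workflow, measuring the conversion rate difference, is where noise gets mistaken for a winner. The sketch below shows one common way to check a difference, a pooled two-proportion z-test. The visitor and conversion counts are hypothetical and are not taken from any test described in this article.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two_sided_p) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical traffic: 5,000 visitors per arm, 1.8% control vs 2.0% variation.
z, p = two_proportion_z_test(conv_a=90, n_a=5000, conv_b=100, n_b=5000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these made-up counts the p-value lands far above the usual 0.05 cutoff, so the apparent "winner" cannot be distinguished from chance.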

The design-first workflow:

Step 1: Audit for structural design mistakes blocking comprehension, trust, progression

Step 2: Fix documented mistakes (not test them—these aren't variations, they're flaws)

Step 3: Establish conversion baseline with functional design

Step 4: Then run A/B tests on optimization opportunities

Step 5: Validate improvements against functional baseline

The difference: fixing mistakes creates a functional foundation, while testing optimizes pages that already function.

A VWO case study demonstrates this principle: after usability issues were identified in the conversion funnel, a navigation menu redesign produced a 62.9% revenue increase. The diagnostic documented the problems, and the fixes targeted actual friction. Testing variations before identifying structural issues would have produced noise masking the signal.

The Seven Fatal Landing Page Design Mistakes

These mistakes prevent conversion before any test element matters:

Mistake 1: Value Proposition Buried Below Fold

The mistake: Most important information (what you offer, who it's for, why it matters) appears only after scrolling—invisible to visitors who never scroll.

Why it kills conversion: Visitors decide whether to engage within 3-5 seconds of page load. With the value proposition below the fold, that decision happens without the information it depends on. The visitor bounces before discovering whether the offer solves their problem.

How to diagnose:

- Heatmaps showing attention patterns in first 3 seconds

- Scroll depth analysis (what percentage reach value proposition?)

- Session recordings filtered for <10 second visits
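The scroll depth question above (what percentage of visitors reach the value proposition?) can be answered from exported session data. This is a minimal sketch; the `Session` fields, the sample sessions, and the 60% page position of the value proposition are all hypothetical stand-ins for whatever an analytics tool exports.

```python
from dataclasses import dataclass

@dataclass
class Session:
    duration_s: float      # time on page, in seconds
    max_scroll_pct: float  # deepest scroll position reached, 0-100

def share_reaching(sessions, element_pct):
    """Fraction of sessions that scrolled at least as far as the element."""
    if not sessions:
        return 0.0
    reached = sum(1 for s in sessions if s.max_scroll_pct >= element_pct)
    return reached / len(sessions)

def short_visits(sessions, max_seconds=10):
    """Sessions shorter than max_seconds, mirroring the <10 second filter."""
    return [s for s in sessions if s.duration_s < max_seconds]

# Hypothetical sample: (duration in seconds, max scroll depth in percent).
sessions = [Session(4, 10), Session(6, 20), Session(45, 80),
            Session(8, 15), Session(120, 100), Session(30, 65)]
VALUE_PROP_PCT = 60  # assumed vertical position of the value proposition

print(f"All visits reaching it: {share_reaching(sessions, VALUE_PROP_PCT):.0%}")
quick = short_visits(sessions)
print(f"Short visits reaching it: {share_reaching(quick, VALUE_PROP_PCT):.0%}")
```

If short visits almost never reach the element, the bounce decision is being made without the value proposition ever being seen.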

Example: SaaS landing page leads with generic "Welcome to Our Platform" headline above fold. Actual value proposition ("Reduce manual data entry 80% without changing your process") appears three scrolls down. 71% of visitors bounce before seeing it.

Value proposition moved above fold: bounce rate drops 71% → 43%.

Research shows the probability of bounce increases 32% as page load time goes from 1 second to 3 seconds, and increases 90% when going from 1 to 5 seconds. But technical speed isn't everything—comprehension speed matters equally. Value propositions requiring >5 seconds to understand produce bounce patterns similar to slow-loading pages even when technical performance is fast.

Mistake 2: Trust Signals Positioned After Conversion Action

The mistake: Customer testimonials, security badges, credibility indicators appear at page bottom—after primary CTA. Visitors asked to convert before receiving proof.

Why it kills conversion: Trust develops progressively as visitors consume the page. A conversion request made before trust is established triggers skepticism. Placing trust signals after the CTA means the decision moment occurs while doubt remains unresolved.

How to diagnose:

- Heatmaps showing scroll depth when visitors exit

- Session recordings tracking scroll behavior before abandonment

- Element positioning audit (where does trust appear relative to CTA?)

- Survey data asking why visitors didn't convert (lack of trust common answer)

Example: Ecommerce product page structure: product image, price, specifications, "Add to Cart" button, then customer reviews at bottom. 31% of visitors click "Add to Cart", but 68% of those abandon without completing the purchase (a 32% completion rate).

Reviews repositioned above "Add to Cart": clicks stay at 31%, but the completion rate jumps from 32% to 54%. Same visitors, same button, different trust positioning.
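The end-to-end effect of that example can be worked out as simple funnel arithmetic. The sketch assumes the 68% abandonment applies to visitors who clicked "Add to Cart", which is an interpretation of the example rather than something it states outright.

```python
def purchase_rate(add_to_cart_rate, completion_rate):
    """Share of all visitors who end up purchasing."""
    return add_to_cart_rate * completion_rate

add_to_cart = 0.31
completion_before = 1 - 0.68  # 68% abandon after adding to cart
completion_after = 0.54

before = purchase_rate(add_to_cart, completion_before)
after = purchase_rate(add_to_cart, completion_after)
relative_lift = after / before - 1

print(f"Purchase rate before: {before:.2%}")
print(f"Purchase rate after:  {after:.2%}")
print(f"Relative lift:        {relative_lift:.1%}")
```

Under that assumption, the add-to-cart rate never moves, yet the share of visitors who purchase rises by roughly two thirds.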

Analysis of top landing pages reveals 76.8% of marketers overlook social proof on their pages despite documented effectiveness. Among successful local landing pages, 36% feature customer testimonials, 28% address common questions, and 30% include videos—all positioned strategically before conversion requests.

Mistake 3: Mobile Design Collapses Critical Elements

The mistake: The desktop design is responsive, but stacking on the mobile version pushes important elements (value proposition, CTA, trust signals) multiple screens below the fold.

Why it kills conversion: Mobile visitors scroll less than desktop visitors, yet research shows mobile accounts for 82.9% of landing page traffic compared to only 17.1% for desktop. Elements three mobile screens down are effectively invisible.

How to diagnose:

- Device-segmented conversion rates (mobile underperforming desktop by >30% suggests layout issues)

- Mobile-specific heatmaps and recordings

- Manual mobile testing on actual devices (simulators miss rendering issues)

- Scroll depth analysis filtered by device type
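The first diagnostic above, mobile underperforming desktop by more than 30%, can be turned into a simple check. This sketch assumes the 30% is a relative gap (measured as a fraction of the desktop rate); the function names and sample rates are hypothetical.

```python
def relative_gap(mobile_rate, desktop_rate):
    """How far mobile conversion trails desktop, as a fraction of desktop."""
    if desktop_rate <= 0:
        raise ValueError("desktop_rate must be positive")
    return (desktop_rate - mobile_rate) / desktop_rate

def flags_layout_issue(mobile_rate, desktop_rate, threshold=0.30):
    """True when mobile trails desktop by more than the threshold."""
    return relative_gap(mobile_rate, desktop_rate) > threshold

# Hypothetical device-segmented conversion rates.
print(flags_layout_issue(mobile_rate=0.007, desktop_rate=0.031))  # large gap
print(flags_layout_issue(mobile_rate=0.025, desktop_rate=0.031))  # modest gap
```

A flag here is a prompt to inspect the mobile layout, not proof of a layout problem; slow mobile load times can produce the same gap.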

Example: Desktop landing page: hero image, value proposition, three benefit points, CTA all above fold. Mobile version stacks vertically: hero image fills screen one, value proposition screen two, benefits screen three, CTA screen four.

Mobile conversion: 0.7% vs desktop 3.1%. Mobile layout redesign with compressed hero, value proposition + CTA on screen one: mobile conversion improves to 2.4%.

Additional research shows 53% of mobile visitors abandon if page takes longer than 3 seconds to load, while 47% expect pages to load in 2 seconds or less. Mobile optimization requires addressing both speed and layout simultaneously.

Mistake 4: Form Friction From Unnecessary Fields

The mistake: Forms request information that is unnecessary for the conversion decision (company size, job title, how did you hear about us) or for processing the transaction (phone number for a digital download, full address for an email signup).

Why it kills conversion: Each form field creates abandonment risk. Fields without clear purpose trigger suspicion ("why do they need this?"). Fields requiring thought or effort (dropdowns forcing category selection, long-answer text) create decision fatigue.

How to diagnose:

- Field-level abandonment tracking (which fields cause exit?)

- Session recordings showing hesitation at specific fields

- Completion rate comparison (form starts vs form completes)

- Stakeholder interview (why does each field exist? What happens if removed?)
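Field-level abandonment tracking, the first item above, boils down to counting which field each abandoning visitor touched last. The sketch below assumes a form analytics tool can export that last-touched field per abandoned session; the field names and the sample data are hypothetical.

```python
from collections import Counter

FIELDS = ["name", "email", "company", "job_title",
          "company_size", "industry", "referral_source"]

def field_dropoff(last_field_touched, fields):
    """Share of abandoned sessions whose final interaction was each field."""
    counts = Counter(last_field_touched)
    total = len(last_field_touched)
    return {f: (counts[f] / total if total else 0.0) for f in fields}

# Hypothetical export: one entry per abandoned form session.
abandoned = ["email", "company_size", "company_size", "industry",
             "job_title", "company_size", "industry", "company_size"]

dropoff = field_dropoff(abandoned, FIELDS)
worst = max(dropoff, key=dropoff.get)
print(f"Highest drop-off field: {worst} ({dropoff[worst]:.0%} of abandons)")
```

The field with the highest share is the first candidate for the stakeholder question of what happens if it is removed.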

Example: Webinar registration form: name, email, company, job title, company size dropdown, industry dropdown, how did you hear about us. Form start rate 42%, completion rate 18%.

Form reduced to name + email only: completion jumps to 34%. Follow-up survey sent post-webinar captures other data from engaged audience.

Research on form optimization shows that reducing forms from 11 fields down to 4 fields yielded a 120% increase in conversions. Survey data indicates 30.7% of marketers believe four form questions is the ideal number for best conversion rates. Each additional field beyond this optimal number reduces completion 3-5% on average.

Mistake 5: Page Load Speed Killing Mobile Conversions

The mistake: Page loads acceptably fast on desktop (2-3 seconds) but mobile performance significantly worse (5+ seconds) due to unoptimized images, render-blocking resources, or heavy scripts.

Why it kills conversion: Mobile users show extreme intolerance for delays. Research consistently demonstrates the direct correlation between speed and conversion.

How to diagnose:

- Speed testing tools (PageSpeed Insights, GTmetrix) showing mobile vs desktop scores

- Real user monitoring showing actual load times by device

- Bounce rate analysis segmented by device and load time

- Conversion rate correlation with page weight
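Segmenting by device and load time, as the list above suggests, can be sketched with a small grouping function. The bucket boundaries and the sample visits are hypothetical; real user monitoring data would replace the hard-coded list.

```python
from collections import defaultdict

def load_bucket(load_seconds):
    """Assign a load time to a coarse bucket (boundaries are assumptions)."""
    if load_seconds < 2:
        return "<2s"
    if load_seconds < 4:
        return "2-4s"
    return "4s+"

def conversion_by_segment(visits):
    """visits: iterable of (device, load_seconds, converted) tuples."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [visits, conversions]
    for device, load_seconds, converted in visits:
        key = (device, load_bucket(load_seconds))
        totals[key][0] += 1
        totals[key][1] += int(converted)
    return {key: conv / n for key, (n, conv) in totals.items()}

# Hypothetical real-user-monitoring rows.
visits = [
    ("mobile", 6.8, False), ("mobile", 5.9, False), ("mobile", 6.1, True),
    ("mobile", 2.5, True), ("mobile", 2.9, False),
    ("desktop", 2.1, True), ("desktop", 1.8, False),
    ("desktop", 2.4, True), ("desktop", 1.5, True),
]

for segment, rate in sorted(conversion_by_segment(visits).items()):
    print(segment, f"{rate:.0%}")
```

A real analysis needs far more rows per segment than this toy sample before any rate is meaningful.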

Example: Ecommerce site loads in 2.1 seconds on desktop, 6.8 seconds on mobile. Desktop conversion 4.2%, mobile conversion 1.1%. Image optimization and lazy loading reduce mobile load time to 2.8 seconds: mobile conversion improves to 3.1%.

Research analyzing page speed impact on conversion reveals significant patterns:

- Website conversion rates drop by an average of 4.42% for each additional second of load time between 0-5 seconds

- For every second delay in mobile page load, conversions can fall by up to 20%

- A 1 second delay in page load time causes conversion rates to drop by 7%

- 70% of consumers say page speed impacts their willingness to buy from an online retailer

- Pages loading in less than 1 second achieve conversion rates of 31.79%, dropping to 20% at 1 second and 12-13% at 2 seconds

Additional research found that for every second a site loads faster, conversion rate improves by 17%, so the relationship holds in both directions. The exact figures differ between studies, but the direction is consistent.

Mistake 6: CTA Button Generic or Invisible

The mistake: Call-to-action button labeled generically ("Submit," "Continue," "Click Here") or designed to blend with page (low contrast, small size, unclear clickability).

Why it kills conversion: Generic CTAs don't communicate value or the next step. A visitor who is unsure what happens after the click hesitates. Invisible CTAs mean even committed visitors can't find the conversion mechanism.

How to diagnose:

- Click tracking (CTA click rate vs page engagement rate)

- Session recordings showing cursor searching for CTA

- Heatmaps revealing CTA receives minimal attention despite prominent positioning

- User testing asking "what would you click to [desired action]?"

Example: Lead generation page CTA labeled "Submit" with light gray button on off-white background. Click-through rate 9% despite form completion requiring only name and email.

CTA changed to "Get Free Analysis" with high-contrast orange button: click-through jumps to 24%. Same form, clearer value proposition and visual prominence.
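"Low contrast" can be measured rather than judged by eye. The sketch below uses the WCAG 2.x relative luminance and contrast ratio formulas to compare a button color against the page background. The hex values are hypothetical approximations of "light gray on off-white" and "orange on off-white", not colors taken from the example page.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light, per the WCAG definition."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)

BACKGROUND = "#f7f7f7"   # assumed off-white page background
GRAY_BUTTON = "#d9d9d9"  # assumed light gray button
ORANGE_BUTTON = "#e8590c"  # assumed high-contrast orange button

print(f"Gray button:   {contrast_ratio(GRAY_BUTTON, BACKGROUND):.2f}:1")
print(f"Orange button: {contrast_ratio(ORANGE_BUTTON, BACKGROUND):.2f}:1")
```

WCAG sets 3:1 as the minimum contrast for user interface components against adjacent colors, which makes a convenient pass/fail line for a CTA button. Label text contrast against the button fill should be checked the same way.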

Research on landing page focus shows why the CTA must stand alone. Analysis shows that removing navigation links from landing pages produces a 28% lift in conversion rates, while removing the navigation menu entirely can increase conversions by 100%. Eliminating competing click targets focuses visitor attention on the primary conversion action.

Mistake 7: Multiple Competing Offers

The mistake: Landing page presents multiple conversion options (download guide + watch demo + schedule call + sign up for newsletter) creating decision paralysis.

Why it kills conversion: Psychology research demonstrates that choice overload suppresses decision-making. More options seem helpful but fragment focus. Visitors uncertain which action is "right" often choose none.

How to diagnose:

- CTA click distribution (which offers receive clicks?)

- Conversion completion rates by CTA type

- Heatmap showing attention fragmentation across multiple CTAs

- Session recordings showing hesitation patterns

Example: SaaS landing page offers: free trial (primary), schedule demo (secondary), download whitepaper (tertiary), contact sales (quaternary). Overall conversion rate 2.8% fragmented across four actions.

Page redesigned with single primary CTA (free trial) and secondary optional path (schedule demo): primary conversion rate jumps to 5.2%. Eliminating choice paralysis focuses decision.

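Research supports this principle: one widely cited analysis reports that multiple offers on one landing page can reduce conversions by up to 266%. (A decrease cannot literally exceed 100%, so the figure presumably describes how much better single-offer pages convert.) Additionally, 43.6% of marketers report lead generation as the main goal when creating landing pages, yet multi-offer designs fragment this focus.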

Landing Page Optimization: The Diagnostic Framework Before Redesign explores the systematic diagnostic process preventing expensive redesigns—useful for identifying which design mistakes actually block conversion versus surface-level aesthetics.

Cost Comparison: Fix Mistakes vs Run Blind Tests

Real scenarios showing design-first ROI:

Scenario 1: SaaS Company, 2.1% Trial Signup Rate

Testing-First Approach (testing on broken foundation):

- A/B testing platform: $500/month

- Designer creating variations: $4,000 (8 variations over 3 months)

- Developer implementing variations: $2,500

- Total 3-month cost: $8,000

- Tests run: headline (3 variations), CTA button color (2), hero image (3)

- Conversion improvement: 2.1% → 2.3% (0.2pp)

- Winner: blue CTA button vs orange (marginal lift, likely noise)

Design-First Approach:

- Design mistake audit: $800 (freelance UX designer, 8 hours)

- Fixes implemented: $2,200 (designer + developer)

- Value proposition moved above fold

- Trust badges repositioned before CTA

- Mobile layout redesigned

- Form reduced 7 fields → 3 fields

- Total cost: $3,000

- Conversion improvement: 2.1% → 3.6% (1.5pp)

- Then A/B tests produce clearer signals on functional foundation

Analysis: Design-first approach cost 62% less ($3,000 vs $8,000) while producing 7.5x better results (1.5pp vs 0.2pp improvement). Subsequent A/B testing produces valid data because foundation works.
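The arithmetic behind that analysis can be reproduced from the line items in Scenario 1. The cost per percentage point at the end is an extra derived metric, not a figure from the scenario itself.

```python
def percentage_point_lift(before_pct, after_pct):
    """Lift in percentage points, rounded to avoid float noise."""
    return round(after_pct - before_pct, 2)

# Testing-first: platform ($500/month for 3 months) + designer + developer.
testing_cost = 3 * 500 + 4000 + 2500
# Design-first: audit + implemented fixes.
design_cost = 800 + 2200

testing_lift = percentage_point_lift(2.1, 2.3)
design_lift = percentage_point_lift(2.1, 3.6)

savings = (testing_cost - design_cost) / testing_cost
lift_ratio = design_lift / testing_lift

print(f"Costs: ${testing_cost:,} vs ${design_cost:,} ({savings:.1%} less)")
print(f"Lift: {testing_lift}pp vs {design_lift}pp ({lift_ratio:.1f}x)")
print(f"Cost per pp: ${testing_cost / testing_lift:,.0f} "
      f"vs ${design_cost / design_lift:,.0f}")
```

The exact saving is 62.5%, which the analysis rounds down to 62%.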

Scenario 2: Ecommerce Store, 1.6% Add-to-Cart Rate

Testing-First Approach:

- 12 weeks A/B testing various product page elements

- Cost: $7,500 (platform subscription + design/dev)

- Tests: product image size, description length, review positioning, CTA wording

- Result: 1.6% → 1.7% (0.1pp improvement, statistical noise)

- Root cause unaddressed: mobile layout pushed CTA three screens below fold

Design-First Approach:

- Mobile layout audit reveals CTA invisibility issue

- Designer compresses hero section, brings CTA to screen one: $1,800

- Additional fixes: contrast improvement on secondary elements, form field reduction

- Total cost: $2,400

- Result: 1.6% → 2.8% (1.2pp improvement)

- Mobile conversion specifically: 0.6% → 2.1% (3.5x improvement)

Analysis: Design-first approach cost 68% less ($2,400 vs $7,500) while producing 12x better results (1.2pp vs 0.1pp). Most impactful: mobile-specific fix addressing actual problem versus blind testing on broken mobile layout.
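Why a 0.1pp result reads as statistical noise becomes clearer when estimating how much traffic a test needs to detect it. The sketch below uses the standard normal-approximation sample size formula for comparing two proportions. The 5% significance level and 80% power are conventional defaults assumed here, not values stated in the scenario.

```python
import math
from statistics import NormalDist

def visitors_per_arm(p_base, p_variant, alpha=0.05, power=0.80):
    """Approximate visitors needed in each arm to detect p_base -> p_variant."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_base + p_variant) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_base * (1 - p_base) + p_variant * (1 - p_variant))
    ) ** 2
    return math.ceil(numerator / (p_variant - p_base) ** 2)

small_lift = visitors_per_arm(0.016, 0.017)  # 1.6% -> 1.7%
large_lift = visitors_per_arm(0.016, 0.028)  # 1.6% -> 2.8%

print(f"Detecting 1.6% -> 1.7%: about {small_lift:,} visitors per arm")
print(f"Detecting 1.6% -> 2.8%: about {large_lift:,} visitors per arm")
```

Under these assumptions the small lift needs well over 200,000 visitors per arm, while the large lift needs only a few thousand. A 12-week test on a typical store cannot confirm a 0.1pp change, whereas a 1.2pp change is easy to verify.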

When Testing Makes Sense vs When Fixing Required

Fix first (these are mistakes, not opportunities):

- Value proposition below fold (not a test, just wrong positioning)

- Trust signals after CTA (chronology error, not variation)

- Mobile layout collapse (responsive failure, not design choice)

- Form fields with no purpose (data collection vs conversion conflict)

- Page speed issues (performance problem, not aesthetic)

- Multiple competing offers (cognitive overload, not optimization variable)

Test after fixing (these are optimization opportunities):

- Headline phrasing variations (all above fold, all clear—which resonates most?)

- CTA button color (high contrast achieved—which performs best?)

- Hero image options (all communicate value—which connects strongest?)

- Benefit order (all visible, all relevant—which sequence converts highest?)

- Page length (foundation works—how much detail optimizes conversion?)

The distinction: mistakes have objectively correct fixes. Optimizations have no clear winner in advance, so only testing reveals the better performer.

Red Flags From Designers Skipping Mistake Audit

Certain designer behaviors signal landing page design without diagnostic foundation:

Red Flag #1: "Let's A/B test multiple complete designs"

Translation: Designer doesn't know which design mistakes block conversion, shotgunning variations hoping one works. Professional approach: audit mistakes, fix documented flaws, then test optimizations.

Red Flag #2: "We'll make it look more modern and professional"

Translation: Aesthetic redesign without conversion focus. Professional approach: identify structural mistakes preventing conversion, fix systematically.

Red Flag #3: "Trust me, this layout converts better based on experience"

Translation: Designer applying generic patterns without diagnosing your specific mistakes. Professional approach: heatmaps, recordings, scroll depth data revealing your page's actual problems.

Red Flag #4: "We'll handle mobile responsiveness in the next phase"

Translation: Desktop-first design creating mobile conversion disasters. Professional approach: mobile-first or simultaneous design preventing layout collapse.

Strong design partners begin with diagnostic:

- 1-2 weeks analyzing current page performance (heatmaps, recordings, scroll depth, conversion funnel)

- Mistake documentation with severity scoring

- Fix recommendations prioritized by impact

- Implementation roadmap separating fixes from optimizations

- Only then: design variations for A/B testing valid hypotheses
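Severity scoring and impact-based prioritization, as listed above, can be sketched as a simple ranking. Everything in this example is hypothetical: the 1-5 severity scale, the estimated monthly conversions lost, the fix costs, and the choice to rank by conversions recovered per dollar. A real audit would supply its own estimates and may weigh them differently.

```python
from dataclasses import dataclass

@dataclass
class Mistake:
    name: str
    severity: int                # 1 (minor) to 5 (blocks conversion), assumed scale
    est_lost_conversions: float  # estimated conversions lost per month
    fix_cost: float              # estimated cost to fix, in dollars

def prioritize(mistakes):
    """Rank by estimated conversions recovered per dollar, then by severity."""
    return sorted(
        mistakes,
        key=lambda m: (m.est_lost_conversions / m.fix_cost, m.severity),
        reverse=True,
    )

# Hypothetical audit findings.
findings = [
    Mistake("Unnecessary form fields", 3, 40, 300),
    Mistake("Mobile layout collapse", 5, 150, 900),
    Mistake("Generic CTA label", 2, 15, 100),
    Mistake("Value proposition below fold", 5, 120, 600),
]

for rank, m in enumerate(prioritize(findings), start=1):
    per_dollar = m.est_lost_conversions / m.fix_cost
    print(f"{rank}. {m.name} (severity {m.severity}, {per_dollar:.3f} conv/$)")
```

Ranking by recovery per dollar favors cheap, high-impact fixes; when budget is not the constraint, sorting by estimated lost conversions alone may be the better ordering.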

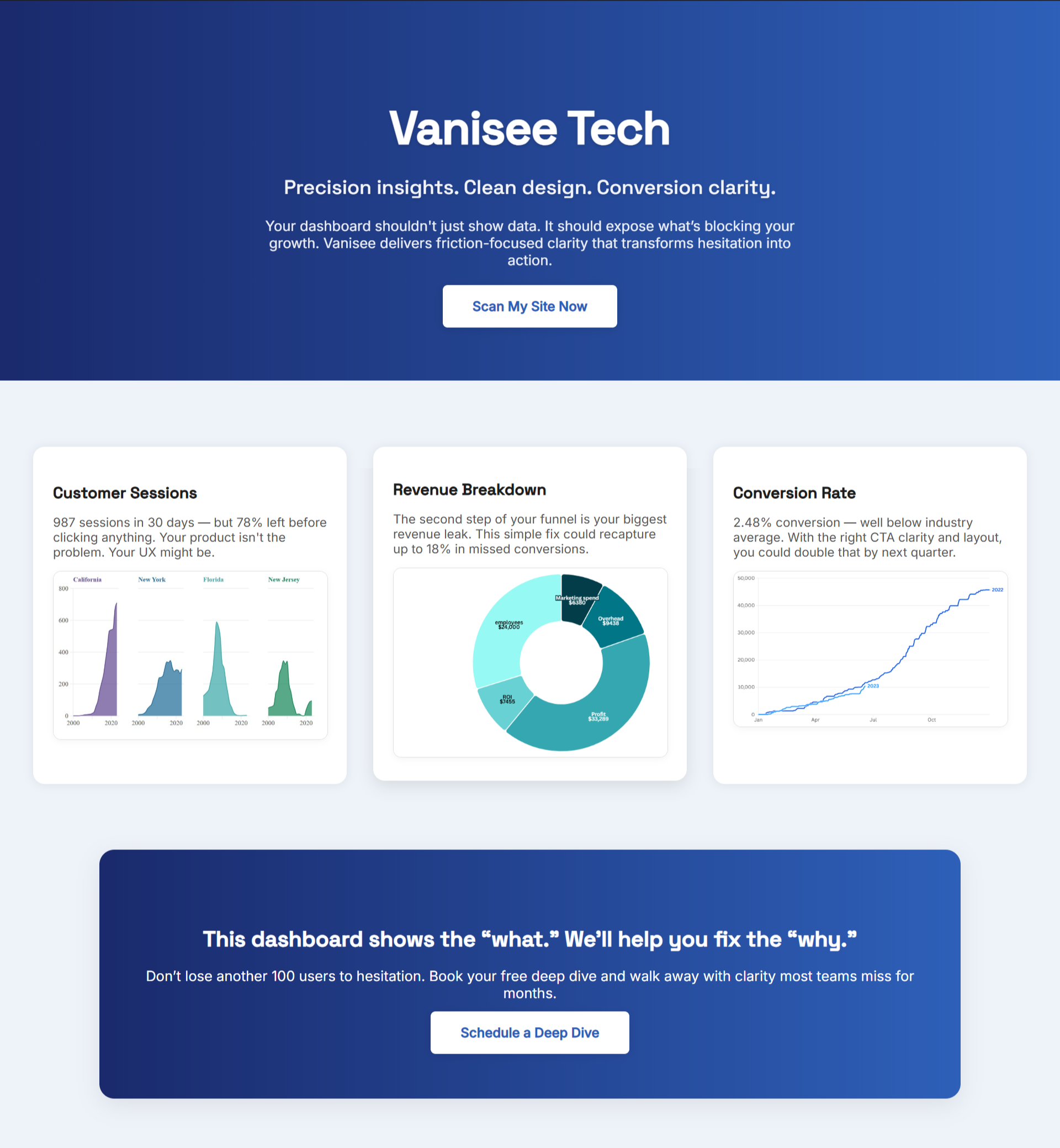

How BluePing Identifies Design Mistakes Before Testing Budget Wasted

Before spending $8,000-$15,000 on A/B testing programs or designer fees, a BluePing diagnostic reveals the structural mistakes blocking conversion.

Use cases:

Use Case 1: Prevent testing waste

An A/B testing platform proposes a 12-week program testing headlines, images, and CTA copy. BluePing reveals specific structural issues that would make these tests meaningless. Fix the structural mistakes first and avoid spending 8 weeks of testing budget on noise.

Use Case 2: Designer proposal validation

Designer quotes $12,000 for "conversion-optimized redesign." BluePing identifies specific structural mistakes requiring targeted fixes. Request focused corrections versus full redesign, saving $8,000.

Use Case 3: Prioritization when budget limited

Can only afford 2-3 design changes this quarter. BluePing provides data showing which mistakes cost most conversions monthly. Clear prioritization prevents optimizing low-impact elements.

Use Case 4: Pre-testing diagnostic

About to launch A/B testing program. BluePing reveals structural mistakes that would create noise drowning test signals. Fix mistakes first, then testing produces valid data with clear winners.

The diagnostic prevents spending testing budget measuring button color variations on pages where visitors can't comprehend the offer—mistake identification before optimization investment.

Conversion Rate Optimization Services: The Diagnostic Framework Preventing Wasted Spend details the broader conversion diagnostic methodology—applicable for evaluating whether service providers identify actual problems versus implementing generic best practices.
